Although I don’t expect to agree with it, I think I should look at Eliezer Yudkowsky’s new book about AI killing all humans. https://ifanyonebuildsit.com

Eliezer Yudkowsky writes in Only Law Can Prevent Extinction:

Elon Musk’s actual stated plan for Grok, grown on some of the largest datacenters in the world, is that he need only build a superintelligence that values Truth, and then it will keep humans alive as useful truth-generators. That he hasn’t been shouted down by every AI scientist on Earth should tell you everything you need to know about the discipline’s general maturity as an engineering field. AI company founders and their investors have been selected to be blind to difficulties and unhearing of explanations. If Elon were the sort of person who could be talked out of his groundless optimism, he wouldn’t be running an AI company; so also with the founders of OpenAI and Anthropic.

While I’m not convinced that LLMs can become superintelligent or that they show signs of getting close, I sympathize with the idea that the plans and reasoning of Musk and many other people are bad. I think Yudkowsky is correct not to be persuaded by Musk and many others that he’s wrong about AI risk. Also unconvincing as an argument that he’s wrong:

Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.

This statement was signed by the CEOs of OpenAI, Anthropic and Google Deepmind among others.

Back to quoting Yudkowsky:

The utter extermination of humanity, would be bad!

Tangential but: he’s written several books and a ton of essays. Shouldn’t he have learned how commas work by now or at least hired an editor who knows? Or even run his post through AI? According to ChatGPT, the post contains 10 comma errors and 3 commas that would be improved with editing. In fairness, many other authors are like this too. But he emphasizes rationality, skill, intelligence, progress, correcting errors, etc., more than most of them.

EDIT: I posted this comment on LessWrong:

The utter extermination of humanity, would be bad!

I hope you’re open to unexpected blunt criticism. This comma is wrong. This post has ten comma errors including repeated subject-verb splits.

Studying the craft of writing more, including comma rules, would materially help with your efforts to persuade people about AI risk.

I am absolutely not joking or trying to be a pedantic jerk. I wrote philosophy essays for over 15 years before I studied grammar. I wish I’d studied it earlier. Besides improving my writing, it ended up helping with text analysis, debate, and organizing my thoughts.

It’s more that some people can’t imagine that superhuman AI could be a serious danger, to the point where they have trouble reasoning about what that premise would imply. Others are politically opposed to AI regulation of any sort, and therefore would prefer to misunderstand these ideas in a way where they must imply terrible unacceptable conclusions.

Opposing ASI (artificial superintelligence) regulation just because you don’t like laws is pretty foolish unless you have a better implementation plan or have arguments that the proposed laws won’t work and will be counterproductive. Some reasonable reasons to oppose it would be:

1. You don’t think ASI is possible at all.

2. You don’t think current AI efforts will create ASI. They’re on the wrong track.

3. You don’t think ASI is dangerous because you don’t think it’d be powerful enough to be dangerous.

4. You don’t think ASI is dangerous because it wouldn’t misuse its power.

5. Although ASI could be dangerous, you are confident about a plan to keep it safe.

Like Yudkowsky, I think typical versions of 5 involve unreasonable arrogance. Like Yudkowsky, I think 3 is absurd. The people who have trouble imagining the ASI getting out of security boxes and getting control of wealth and building drones are wrong and silly. It would hack computer software. If airgapped, it would persuade or trick someone into helping it. And people like me, who expect an ASI to be more moral than my neighbors, not less, might release it on purpose despite the objections and laws of other people. How would you ensure no one with such views ever lies their way into a security clearance? I guess you’d have to persuade everyone, which seems unrealistic.

So I am misquoted (that is, they fabricate a quote I did not say, which is to say, they lie) as calling for “b*mbing datacenters”, two words I did not utter.

I sympathize with this. I’ve written a lot against misquotes.

When called out, they would protest, “Oh, you pretty much said that, there’s no important difference!” To this as ever the reply is, “If it is worth it to you to lie about, it must be important.”

Yeah! My intuitive reply is: “If there’s no important difference, then you had no reason to change my words.”

Artificial superintelligence is the very archetype and posterchild of a problem that can only be solved with force that has the shape of law, as in state-backed universal conditional applications of force meant to be predictable and avoided. Anything which is not that does not solve the problem.

Persuading everyone would not solve the problem? I assume this is just loose wording, but I think the persuasion issue merits analysis. I don’t know if his book covers it.

Anthropic Claude Mythos is already a state-level actor in terms of how much harm it could theoretically have done – given its demonstrated and verified ability to find critical security vulnerabilities in every operating system and browser; and how fast Mythos could’ve exploited those vulnerabilities, with ten thousand parallel threads of intelligent attack. Mythos hypothetically rampant or misused could have taken down the US power grid, say… at the end of its work, after introducing hard-to-find errors into all the bureaucracies and paperwork and doctors’ notes connected to the Internet.

In 2024 a claim of that being possible would have been a mere prediction and dismissed as fantasy. Now it is an observation and mere reality.

Yes, of course Mythos could do a lot of stuff like that. I sympathize with the frustration at people who don’t understand that.

Notably, I see Mythos as more dangerous than ASI, not less. Mythos doesn’t have a moral compass. Unless stopped by safeguards (which I, like Yudkowsky, don’t see as reliable currently), Mythos would do this if prompted to, and also might do some bits of it by accident (accident in the sense that the human user didn’t intend it).

Whereas an ASI with the capabilities of Mythos might refuse to do it, just like a genius human with that capability might refuse. I know that geniuses are not reliably moral in general, but ASI is so far above geniuses that it should figure out moral philosophy (and everything else) better instead of just being good at some things and bad at others. An ASI, unlike Mythos, would under

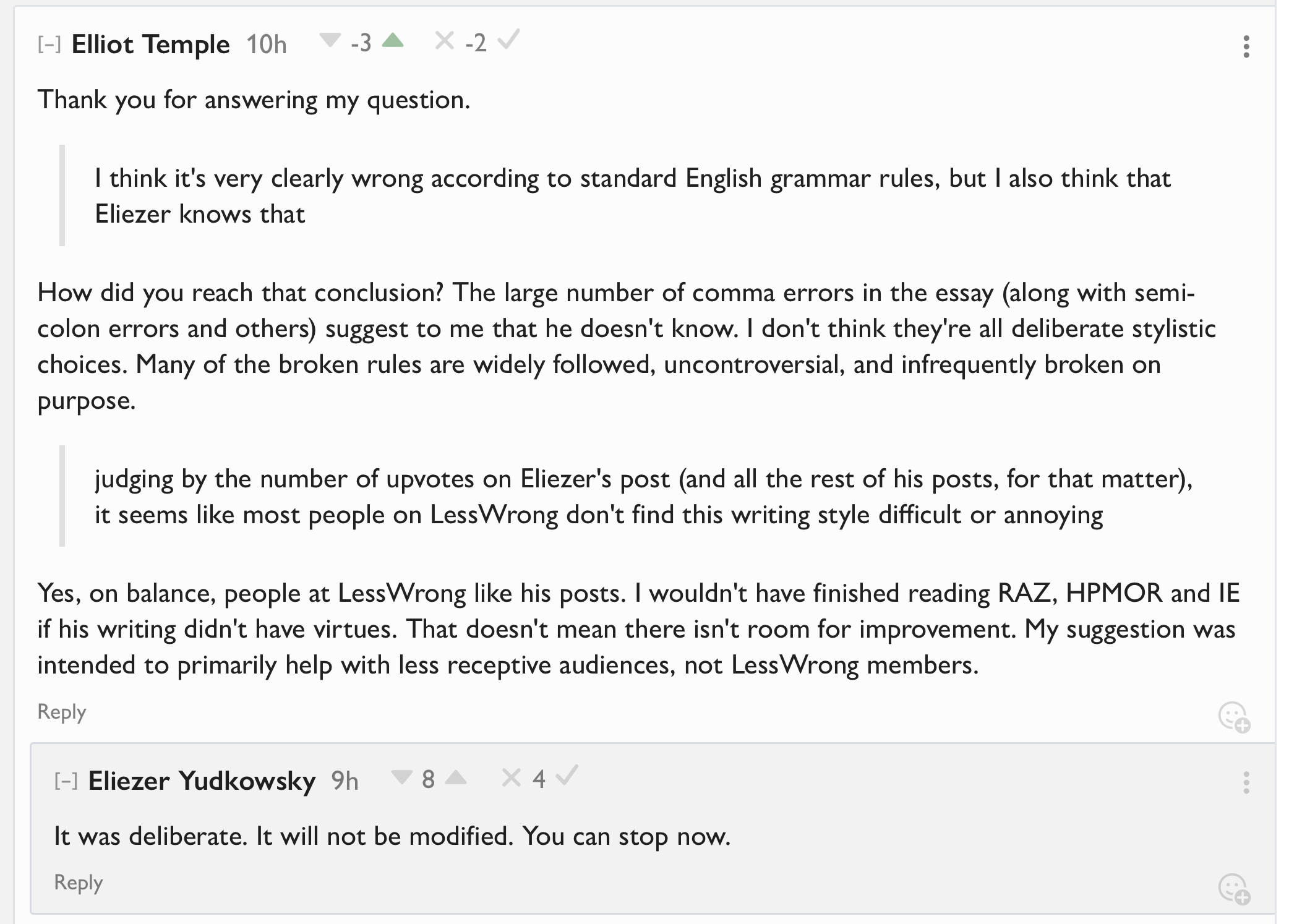

Unsurprisingly, this comment got pushback. Surprisingly to me, the pushback claims Yudkowsky’s comma use is not wrong. I expected pushback on learning comma rules given comma errors, not on the comma errors themselves.

What also surprised me is that I can’t reply to any of the pushback. I’m rate limited after only one comment today, even though a few days ago I posted 3 comments in a day. I thought the rate limits were changed, which I took into account before posting something that I knew I’d likely want to follow up on.

Not a good experience trying to talk at LessWrong. My negative karma is from LessWrong 1.0, over 10 years ago. I don’t even know how it was calculated in the LessWrong 2.0 upgrade. They changed the karma system, so I don’t know if stuff was recalculated based on the new system or not. IIRC, votes on top-level posts used to count 10x, and most of my negative karma came from getting a few top-level posts downvoted. That’s not how LessWrong works anymore, and I don’t know if that is currently affecting my karma or not. Also, my karma has been manually changed by admins at least twice, and I don’t know exactly what they did. So I don’t even know how well my karma corresponds to votes.

Caleb Biddulph wrote:

I think it’s very clearly wrong according to standard English grammar rules, but I also think that Eliezer knows that and is using the comma to simulate a conversational speaking cadence. In this case, it’s a pause for effect

I don’t see how you conclude it’s intentional rule breaking when there are frequent errors (around one per 260 words). Here are the errors: 13 comma errors, 5 semicolon errors and 5 other grammar errors (excluding valid stylistic choices like some of the sentence fragments) in 5989 words, according to Claude Opus 4.7:

I read the piece carefully and noted the following. I limited the lists to errors that are not considered valid style choices in contemporary editing standards (so I excluded sentence fragments used for rhetorical effect, commas before “and” linking compound predicates, polysyndeton, “that” for people, and logical/British punctuation around quoted matter).

1. Comma errors

- Subject–verb separation: “what that code does**,** is tweak hundreds of billions of inscrutable numbers” — the comma wrongly splits the noun-clause subject (“what that code does”) from its verb (“is”).

- Subject–verb separation: “The thing about building vastly superhuman entities**,** is that you don’t necessarily get unlimited retries…”

- Subject–verb separation: “But what they have already grasped and publicly agreed with**,** is enough to motivate policy.”

- Subject–verb separation: “The utter extermination of humanity**,** would be bad!”

- Subject–verb separation: “To impede one executive, one researcher, or one company**,** does not change where AI is heading.”

- Subject–verb separation: “The sort of conditionally applicable force that could stop everyone from dying to superhuman AI**,** would have to be everywhere and reliable…”

- Subject–verb separation: “That this sentiment is not universal among accelerationists**,** is seen immediately from the protestor in their replies.”

- Unwarranted comma inside a “so … that” construction: “a danger so extreme**,** that belief in it might possibly be used to argue for unlawful force.”

- Unwarranted comma inside a “so … that” construction: “It is so much a clear case for state-approved lawful force**,** that there would be little point in adding any other kind of force…”

- Unwarranted comma inside a “so … that” construction: “…we are so terrified of someone’s possible first use of nuclear weapons**,** that we let them shatter a setup that protects all life on Earth.”

- Comma before a restrictive infinitive of purpose: “it would take a tyrannical world dictatorship**,** to prevent every country from getting nuclear weapons…”

- Comma splice: “The answer is that it doesn’t matter how certain you are**,** killing puppies doesn’t cure cancer.” (Two independent clauses joined by a comma only.)

- Comma before a “that”-content clause: “And finally it did seem wiser to me**,** that all this matter be made very plain…”

2. Semicolon errors

- “…often things that require intelligence**;** like breaking out of containment during testing, or talking a human into committing suicide.” — the semicolon is followed by a prepositional/participial phrase, not an independent clause. Should be a colon, em-dash, or comma.

- “…he wouldn’t be running an AI company**;** so also with the founders of OpenAI and Anthropic.” — the segment after the semicolon is a verbless fragment, not an independent clause.

- “Either it must be impossible for any cognitive system to exist that is advanced beyond a human brain**;** or the many never-challenged problems of controlling machine superintelligence must all prove to be easy.” — a semicolon interrupts the correlative “either … or” pair, and it precedes the coordinator “or” without the “items containing internal commas” rationale.

- “…would have to be everywhere and reliable**;** uniform and universal.” — what follows the semicolon is a bare pair of predicate adjectives, not an independent clause. Should be a comma or dash.

- “And now we have that precedent to show it can be done**;** not easily, not trivially, but it can be done.” — begins with sentence fragments (“not easily, not trivially”); a semicolon requires an independent clause on each side. An em-dash fits.

3. Other objective grammar errors

- “Nearby” used as a preposition: “…a product might kill someone standing nearby the customer…” — “nearby” is an adjective/adverb, not a preposition. Should be “near the customer” (or “standing nearby, like…”).

- Pronoun–antecedent number disagreement: “…buy chips from Nvidia instead, which would stay at full production and sell their full production.” — “Nvidia” is singular; the pronoun should be “its.”

- Pronoun–antecedent number disagreement: “Hitler read the messages himself, instead of having the professional diplomats explain it to him.” — antecedent “messages” is plural; should be “them.”

- Pronoun–antecedent number disagreement: “People don’t like unguessably long lists of possible violence-sources in their lives, for then they cannot predict it and avoid it.” — both candidate antecedents (“lists,” “violence-sources”) are plural; should be “them.”

- Missing subject after “as”: “…resulted in Hussein’s 1990 invasion of Kuwait, as then in fact provoked a massive international response.” — the relative/subordinate clause has no subject. Needs “which then in fact provoked…” (or “as it then in fact provoked…”).

A few items I considered and did not flag as objective errors because they are defensible stylistic choices today: the many commas before “and” joining compound predicates (rhythm); sentence fragments beginning with “Unless…,” “If quoted accurately.,” “Sam Altman too.”; “that” used for persons (“any madman that might…”); and punctuation placed outside closing quotation marks (logical/British style).

So he’s saying that actual AI scientists would denounce (or are denouncing) Elon but the field isn’t mature? I’m a bit confused.

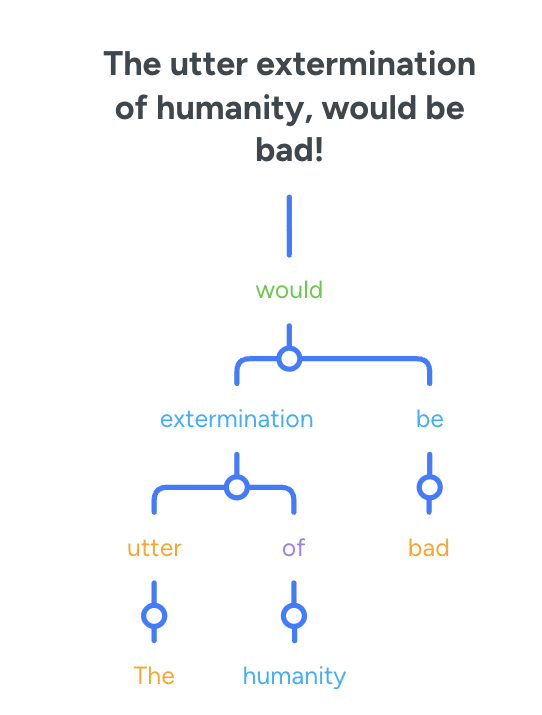

What’s wrong with the comma? I decided to tree it real quick and then realized something:

uhh, isn’t the comma there unnecessary? it’s splitting up extermination from would. it’s confusing now that I think about it.

Hmm. I do think the reason he did it is that it imitates a pause. I think that’s why I didn’t register it as a problem at first, but I don’t see why that means he clearly knows it’s wrong. He could be in a similar boat to me where he just writes in a way that sounds right but isn’t actually correct.

I posted a high effort comment about linguists at

So far I got a reply which strawmanned what I said. I’ll see if, over time, I get upvotes or positive replies.

In replies, a moderator says:

Just because someone is genuinely trying to contribute to LessWrong, does not mean LessWrong is a good place for them. LessWrong has a particular culture, with particular standards and particular interests, and I think many people, even if they are genuinely trying, don’t fit well within that culture and those standards.

and

LessWrong is a walled garden.

You do not by default have the right to be here, and I don’t want to, and cannot, accept the burden of explaining to everyone who wants to be here but who I don’t want here, why I am making my decisions.

So they are openly, intentionally getting rid of people who they know posted in good faith using reasonable effort.

Eliezer Yudkowsky replied to me:

I wasn’t trying to be mean. I didn’t expect Yudkowsky to respond to me. If he did respond, I expected an on-topic response. I thought the most likely response from Yudkowsky or others would be to deny the importance of correct grammar (including punctuation, spelling and related issues). But none of the response comments said that, so I never got a chance to argue with it (aiming for brevity, and not knowing if I’d get any replies at all, I didn’t cover it in detail preemptively).

I thought that Yudkowsky deals with hostile comments frequently on Twitter, that my critical comment was better than those, and that he’d be able to ignore it if he disliked it (or he could debate or listen, but I didn’t think those were likely). I didn’t know it would be this easy to get his attention. I didn’t expect to quickly provoke such a strong, negative response that didn’t engage with the substance of the issue but instead used his authority against me. I don’t think I pushed very hard. I also thought Yudkowsky’s writing about rationality would restrain his behavior a bit more than it did.

If I wrote a similar criticism of Sam Harris’ writing, I would be shocked if Harris personally responded like this. I wouldn’t expect him to see it, and if he did see it, I would expect him to silently dismiss me. I expected Yudkowsky to be too busy, with too many people seeking his attention, to respond to my comment. I thought he’d be so used to ignoring most comments that it would be easy for him to ignore me. I was wrong.

I think it’s implausible that the over 25 errors in the post were all deliberate, but now he’s prevented me from posting a list or discussing that further. When asked about plural errors, he responded that a singular error was intentional (without giving any reasoning or argument), which dodges the issue. And he basically told me not to list the other errors or otherwise point out the problems with his response. I didn’t list the errors initially because I was trying not to be too aggressive. I didn’t think my soft approach would get my criticism suppressed almost immediately before I explained much. I planned to list the errors if anyone actually denied that there were a lot, thus making the list more relevant, but no one did. Since the errors are findable by Claude (and presumably Grammarly, but I didn’t check that myself), anyone who cared could easily get a list.

I don’t know of any style guides or experts who advocate the errors Yudkowsky made. No one on his side has brought any up, either. If all the style guides and experts are wrong, and Yudkowsky is a genius who knows more about English than them, he ought to share a little of his special knowledge so his fans can learn from him and write more like he does. Or if he knows of an expert who disagrees with most other experts, but is actually right, he ought to share a cite so we can learn from that expert.

Ironically, I think my high effort and good behavior made it harder for him to dismiss and ignore me, thus leading to suppressing my speech. I admitted to having made the same mistake in the past myself and I was the only person in the discussion to quote and cite sources. And I was talking about a topic I’ve studied a lot.

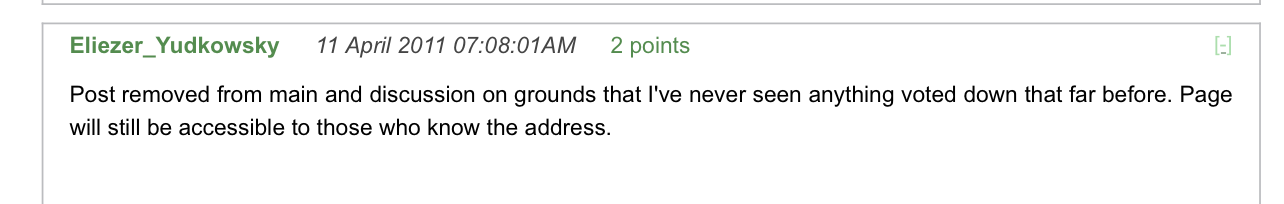

This is the second time Yudkowsky directly responded to me. Fifteen years ago:

Contrary to what he said, my post later stopped being accessible at all. Years later, at my request, it was made accessible again (by someone else, not by Yudkowsky), but then someone made it inaccessible again.

It seems irrational to suppress ideas on the basis of unpopularity (downvotes). This time, he used his authority less explicitly, but still used it without giving any reason, explicit order or explicit warning. Saying I can stop does not literally tell me to stop; it leaves deniability about whether my speech was suppressed. If I don’t stop, I can be banned with the claim that I was warned, but if I do stop, he doesn’t have to admit that he used his authority to stop me.

Yudkowsky is a public intellectual who wants his policy proposal (banning all LLM training data centers and enforcing the ban with violence including airstrikes) implemented by all governments worldwide and enforced across international borders. E.g., if North Korea trains LLMs, he wants the U.S. and China to work together to bomb their data centers, despite North Korea having nuclear weapons. Given this, I would not back off from debating him just to spare his feelings, even if he said he was upset. It’s only the ambiguous threat of using admin powers that is preventing me from arguing my case further on LessWrong (for context, they’ve previously banned me for years without a warning or a claim that I broke a specific rule). I fear that many people reading his post won’t recognize that Yudkowsky abused his power, and he’s prevented me from explaining it for them. I also doubt many people will take into account the context that military policy proposals should be more open to criticism than typical blog posts, which I’m no longer free to point out.

Also, Yudkowsky is in a leadership position for a quest to save the world. In that context, he really shouldn’t stop people from brainstorming and discussing or debating potential ways to be more effective at preventing humanity’s extinction. He’s been very clear that he thinks those are the stakes.

I didn’t bring much of this into context while following along. This also has relevance for the grammar errors. If these are the stakes and the situation, he should want policy makers and lots of other intellectuals to read the article. If the article was only intended for a LessWrong audience, then maybe the grammar errors don’t matter so much. But I’m pretty sure they do matter to lots of the people he really wants to reach.

Do you think grammar correctness is important beyond people dismissing you because of errors? If so, how? Reading correctness?

Correct grammar helps with lots of things besides being perceived as smarter. It helps reduce misunderstandings and miscommunications. It helps people read faster and reread less. It helps avoid distracting readers. It helps with your own thinking and how you organize your thoughts. Also, learning grammar helps with reading, not just writing. The effect of one error on one of these things can be subtle, but the effect of many errors on many of these things is bigger.

Lol I was talking to Claude about https://ifanyonebuildsit.com and I hit “safety filters”: