Because Apple uses unified RAM instead of separate vram. If you get a 64gb mac studio, that’s like having over 50gb of vram available if needed which is way more than regular graphics cards. Like an expensive RTX 5080 has 16gb vram and most cards have less. You can easily get a mac with 16gb of unified ram for less than a 5080 costs.

Yeah. I don’t think it’s quite as performant as direct VRAM but it’s way more accessible. I wouldn’t be surprised if in 5-10 years all PCs use unified memory.

It’s also worth noting that memory has historically been pretty cheap for consumer GPUs. Like here’s an article from 9 mo ago that cites $10/chip for 3GB GDDR7 chips:

Prices are a lot higher now, but I think this is curious because nvidia has been stingy on memory for a long time. Would we have these issues if nvidia had just shipped consumer cards with more memory earlier? I heard (based on tech specs and gpu board analysis) that the 3070 was going to have 16GB but shipped with 8GB instead (and memory was still cheap then). The GTX 1080 and 1080 Ti are about 10 years old now and shipped with 8GB and 11GB vram. Maybe there’s a good reason (like gpu die complexity) that they couldn’t, but it definitely seems like some of nvidia’s anticonsumer practices have contributed to some negative side effects. But also maybe I’m just interpreting market decisions with the benefit of hindsight and shouldn’t make this kind of conclusion.

That said, one thing I neglected to consider is that the bottleneck is different for different architectures (and if you include like batch sizes and stuff). So being slightly less efficient for particular architectures might not matter much if we get more and different architectures. We already have a decent collection (transformers of different kinds, convolutional layers, new techniques with attention and engrams, and there’s stuff IDK as much about like LSTM and what performance matters for training vs execution/inference).

Gemma 4 (31b) was unusable with 64gb ram by default but ran ok with a smaller context window. Still took a couple minutes to answer one question.
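A rough way to see why shrinking the context window helps: on top of the fixed cost of the weights, the key/value cache grows linearly with context length. The sketch below uses the usual full-attention estimate. The layer count, KV-head count and head size are placeholder values, not Gemma 4's actual configuration (`ollama show` doesn't list them), and a model that uses sliding-window attention on some layers would need less than this.

```python
def kv_cache_bytes(context_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Estimate KV-cache size for full attention: one K and one V vector per layer per token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_len

# Placeholder architecture numbers (assumptions for illustration only).
ARCH = dict(n_layers=60, n_kv_heads=16, head_dim=128)

for ctx in (8192, 262144):
    gib = kv_cache_bytes(ctx, **ARCH) / 2**30
    print(f"context {ctx:>6}: ~{gib:.2f} GiB of KV cache")
```

Whatever the real architecture numbers are, going from 262144 to 8192 tokens cuts this term by a factor of 32.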

gemma4 31b with 8k context

MacStudio[9:54] curius > ollama show gemma4:31b

Model

architecture gemma4

parameters 31.3B

context length 262144

embedding length 5376

quantization Q4_K_M

requires 0.20.0

Capabilities

completion

vision

tools

thinking

Parameters

temperature 1

top_k 64

top_p 0.95

License

Apache License

Version 2.0, January 2004

…

MacStudio[9:57] curius > cat << 'EOF' > Modelfile

FROM gemma4:31b

PARAMETER num_ctx 8192

EOF

ollama create gemma4-8k -f Modelfile

ollama run gemma4-8k

gathering model components

using existing layer sha256:280af6832eca23cb322c4dcc65edfea98a21b8f8ab07dc7553bd6f7e6e7a3313

using existing layer sha256:7339fa418c9ad3e8e12e74ad0fd26a9cc4be8703f9c110728a992b193be85cb2

creating new layer sha256:2924f9c640a2070b2a20ef7280844f11428d3259a607fc94b61bb39de13ef9f4

writing manifest

success

explain qualitative vs quantitative

Thinking…

Qualitative vs. Quantitative.

Explain the differences clearly and comprehensively.

* *Quantitative:* Numbers, statistics, measurable, objective, "How many/much?"

* *Qualitative:* Words, descriptions, meanings, subjective, "Why/How?"

A good explanation should be structured logically:

* **High-level definitions** (The "elevator pitch").

* **Detailed breakdown** (Comparison table or bullet points).

* **Examples** (To make it concrete).

* **Pros and Cons** (When to use which).

* **Relationship** (How they work together—Mixed Methods).

* *Quantitative* = Quantity (numbers).

* *Qualitative* = Quality (characteristics).

* *Quantitative:* Goal is to test hypotheses, find patterns, and generalize results to a larger population. It's about *breadth*.

* *Qualitative:* Goal is to understand concepts, experiences, and motivations. It's about *depth*.

* *Quantitative:* Surveys (multiple choice), polls, sensors, experiments, analytics.

* *Qualitative:* Interviews, focus groups, open-ended questions, case studies, observation.

* *Quantitative:* Numerical, stats, graphs, charts.

* *Qualitative:* Text, audio, video, themes, narratives.

* *Quantitative:* "On a scale of 1-10, how happy are you?" $\rightarrow$ "7."

* *Qualitative:* "Tell me why you feel happy today." $\rightarrow$ "I feel happy because I spent time with my family."

* *Quantitative:* Fast, objective, scalable, but lacks context ("The what").

* *Qualitative:* Rich detail, nuanced, but time-consuming and subjective ("The why").

* Mention "Mixed Methods." Explain that the best research often uses both (e.g., a survey to find a trend, then interviews to understand it).

…done thinking.

The simplest way to remember the difference is: Quantitative is about numbers, and Qualitative is about words.

Here is a detailed breakdown of how they differ in approach, method, and purpose.

1. Quantitative (The “What” and “How Many”)

Quantitative research focuses on gathering hard data that can be measured and converted into statistics. It is used to prove or disprove a hypothesis and to

find patterns across a large group of people.

- Goal: To quantify a problem, identify averages, and generalize results from a sample to a larger population.

- Data Type: Numbers, percentages, measurements, and statistics.

- Questions it asks:

- “How many?”

- “How often?”

- “To what extent?”

- “What is the relationship between X and Y?”

- Common Methods:

- Surveys/Polls with multiple-choice questions.

- Experiments (e.g., Clinical trials).

- Systematic Observations (e.g., counting how many cars pass through a light).

- Analytics (e.g., Website traffic, sales figures).

Example: A coffee shop wants to know if their new latte is popular. They track that 75% of customers ordered the latte and the average rating was 4.2

out of 5 stars.

2. Qualitative (The “Why” and “How”)

Qualitative research focuses on understanding human experience, emotions, and motivations. It is exploratory and looks for depth and nuance rather than broad

statistics.

- Goal: To gain a deep understanding of a concept, a social phenomenon, or a personal experience.

- Data Type: Text, audio, video, images, and detailed descriptions.

- Questions it asks:

- “Why?”

- “How did that make you feel?”

- “What was your experience like?”

- “What do you think about X?”

- Common Methods:

- One-on-one interviews (Open-ended questions).

- Focus groups (Group discussions).

- Case studies (In-depth look at one person or company).

- Ethnography (Living within a community to observe culture).

Example: The same coffee shop wants to know why people like the latte. They interview five customers and find that people love it because it “reminds

them of childhood” and has a “creamy texture that feels luxurious.”

Comparison Summary Table

| Feature | Quantitative | Qualitative |

|---|---|---|

| Focus | Testing hypotheses / Breadth | Exploring ideas / Depth |

| Data | Numbers & Stats | Words & Meanings |

| Sample Size | Large (Representative) | Small (Specific) |

| Approach | Objective / Structured | Subjective / Unstructured |

| Analysis | Statistical analysis | Thematic analysis (finding patterns) |

| Result | Precise, numerical “Truth” | Nuanced, descriptive “Insight” |

Which one should you use?

It depends on your goal. Often, the best approach is Mixed Methods, where you use both to get the full picture.

- Use Quantitative if: You need to confirm a trend, prove a point with data, or see if a change had a statistically significant impact.

- Use Qualitative if: You are in the early stages of a project, you don’t know what the problem is yet, or you want to understand the “human” side of a

story.

The “Mixed Methods” Workflow:

- Qualitative $\rightarrow$ Quantitative: You interview people to find a problem $\rightarrow$ you create a survey to see how many other people have that same problem.

- Quantitative $\rightarrow$ Qualitative: You see a drop in sales in your data $\rightarrow$ you interview customers to find out why they stopped buying.

Send a message (/? for help)

more local Gemma 4

What does it take for arguments and evidence to be conclusive?

Thought for 68.4 seconds

Conclusiveness of arguments and evidence. Explain the criteria or conditions under which an argument or piece of evidence is considered “conclusive.”

* *What does "conclusive" mean?* It means putting an end to doubt; decisive; final. It leaves no room for reasonable alternative explanations.

* *Contexts:* Law (beyond reasonable doubt/preponderance), Science (empirical proof/reproducibility), Logic (deductive validity/soundness), Philosophy (cogency).

* **Category 1: Logical Structure (The "Argument" part)**

* Deduction vs. Induction.

* Validity (structure) and Soundness (truth of premises).

* Avoiding fallacies.

* **Category 2: Quality of Evidence (The "Evidence" part)**

* Reliability (consistency).

* Validity (measuring what it's supposed to).

* Sufficiency (amount/coverage).

* Direct vs. Indirect (circumstantial).

* **Category 3: The Relationship between Evidence and Conclusion (The "Connection")**

* Causality vs. Correlation.

* Exclusivity (ruling out alternatives).

* Coherence (fitting into a larger framework).

* **Category 4: Contextual Standards (The "Threshold")**

* Legal standards (Reasonable doubt).

* Scientific standards (P-values, Peer review).

* Mathematical standards (Formal proof).

* *Introduction:* Define conclusiveness. Acknowledge that it varies by field.

* *Section 1: Logical Soundness (The Framework):*

* Focus on *Deductive Soundness*. If premises are true and the logic is valid, the conclusion *must* be true. This is the gold standard for conclusiveness.

* *Section 2: Evidentiary Strength (The Raw Material):*

* Discuss *reliability* and *verifiability*.

* Mention *convergence* (multiple independent sources pointing to the same result).

* *Section 3: The "Elimination of Alternatives" (The Key):*

* This is crucial. To be conclusive, you can't just have one good theory; you have to show that other theories are impossible or highly improbable. (Occam's Razor, but more about falsification).

* *Section 4: Contextual Thresholds (The Bar):*

* Contrast Math (100%) with Science (High probability) with Law (Reasonable doubt).

* *Conclusion:* Summary statement. Conclusiveness is often an asymptotic goal rather than a permanent state (especially in science).

To say that an argument or a piece of evidence is conclusive means that it settles a question definitively, leaving no reasonable room for doubt or alternative interpretations.

In reality, “conclusiveness” varies depending on the field (mathematics, law, science, or philosophy). However, across all disciplines, for an argument to be considered conclusive, it generally must satisfy four primary dimensions: logical validity, evidentiary sufficiency, the elimination of alternatives, and contextual coherence.

1. Logical Soundness (The Structure)

Evidence alone is not an argument; it is raw data. For evidence to become a conclusive argument, it must be wrapped in a logically sound structure.

- Validity: An argument is valid if the conclusion logically follows from the premises. If the premises are true, the conclusion must be true.

- Soundness: An argument is sound if it is valid and its premises are actually true in the real world.

- Avoidance of Fallacies: A conclusive argument must be free of logical leaps (non sequiturs), circular reasoning, or straw man arguments. If a flaw in logic is found, the conclusion is no longer inevitable, and therefore not conclusive.

2. Evidentiary Strength (The Raw Material)

For evidence to be conclusive, it cannot be anecdotal or cherry-picked. It must possess several key qualities:

- Reliability: The source of the evidence must be dependable. In science, this means reproducible results; in law, this means a credible witness or a verified forensic trail.

- Sufficiency: There must be enough evidence to cover all necessary points of the claim. A single fingerprint at a crime scene is evidence, but it is rarely conclusive evidence of guilt because it doesn’t explain how or why the person was there.

- Directness: Direct evidence (e.g., a video of the event) is more likely to be conclusive than circumstantial evidence (e.g., the person was seen walking toward the event). However, a “convergence” of multiple circumstantial pieces of evidence can eventually become conclusive.

3. The Elimination of Alternative Explanations

This is the most critical hurdle for conclusiveness. An argument is not conclusive if there is a plausible alternative explanation for the evidence.

- The “Rule-Out” Process: To be conclusive, you must not only prove that your theory could be true, but that other competing theories are impossible or highly improbable.

- Falsification: In science, a conclusion becomes more robust when you have attempted to prove it wrong and failed.

- Exclusivity: If Evidence A supports Theory X, but also supports Theory Y, then Evidence A is suggestive, not conclusive. It only becomes conclusive when you provide Evidence B, which supports X but contradicts Y.

4. Contextual Thresholds (The “Bar”)

What counts as “conclusive” depends entirely on the burden of proof required by the specific field:

- Mathematics/Logic: The bar is absolute. A proof is conclusive if it is derived from axioms via laws of inference. There is no “probability” here; it is 100% certain.

- Criminal Law: The bar is “Beyond a Reasonable Doubt.” This doesn’t require absolute mathematical certainty, but it requires that no other reasonable explanation exists that would lead to a verdict of not guilty.

- Civil Law: The bar is “Preponderance of Evidence” (more likely than not). Here, evidence is “conclusive” if it simply tips the scales to 51%.

- Science: Science rarely claims “absolute” conclusiveness because it is always open to new data. Instead, it looks for statistical significance (p-values) and consensus. A theory becomes “conclusive” for practical purposes when the evidence is so overwhelming that it would be irrational to deny it (e.g., the Theory of Gravity).

Summary Checklist

If you are evaluating whether an argument is conclusive, ask these four questions:

- Does the conclusion actually follow from the premises? (Logic)

- Is the evidence verifiable, reliable, and sufficient? (Quality)

- Have all other plausible explanations been ruled out? (Exclusivity)

- Does this meet the required burden of proof for this specific field? (Threshold)

If the answer to any of these is “No,” the argument may be persuasive or probable, but it is not conclusive.

After it’s conclusive, can it be revisited later, questioned or doubted?

Thought for 68.8 seconds

Can something that has been deemed “conclusive” be revisited, questioned, or doubted later? The concept of “conclusiveness” and its permanence/stability over time.

* *Is "conclusive" forever?* In mathematics, yes (mostly). In science, no. In law, sometimes (res judicata), but appeals exist. In philosophy, almost never.

* *Why would it be revisited?* New evidence, new tools for analysis, new logical frameworks, shifts in cultural/social paradigms.

* *The difference between "Conclusive given current data" vs. "Absolute Truth."*

A good answer should break down the response by the domains mentioned in the previous turn, as the rules change based on the field.

* **Introduction:** Yes, it can. "Conclusive" is often a status relative to a specific point in time and a specific body of knowledge.

* **Science (The most dynamic field):**

* Concept: Provisional truth.

* Example: Newtonian physics $\rightarrow$ Einsteinian physics.

* Mechanism: New data, better technology (telescopes, microscopes), paradigm shifts (Kuhn).

* **Law (The most rigid/procedural field):**

* Concept: Finality vs. Justice.

* Mechanism: New evidence (DNA), appeals, exonerations.

* Terms: "Res judicata" (the thing has been judged) vs. "New evidence."

* **Mathematics/Logic (The most stable field):**

* Concept: Absolute truth.

* Nuance: It stays conclusive *unless* the axioms are changed or a flaw is found in the proof (which is rare but happens).

* **History/Philosophy (The most interpretive fields):**

* Concept: Revisionism.

* Mechanism: Discovery of new archives, changing social lenses.

* **Philosophical/Epistemological layer:**

* Distinguish between *Epistemic Certainty* (I know it's true) and *Practical Conclusiveness* (We agree to act as if it's true).

* *Correction:* I should make sure not to say science is "guessing." Science is the most rigorous, but it is designed to be self-correcting.

* *Nuance:* Mention that questioning "conclusive" evidence is actually a virtue in intellectual pursuit (skepticism).

The short answer is yes. In almost every field of human inquiry, something that is labeled “conclusive” today can be revisited, questioned, or overturned tomorrow.

The reason for this is the distinction between Absolute Truth and Epistemic Certainty. Absolute Truth is how the universe actually is; Epistemic Certainty is the highest level of confidence we can have given the information we currently possess.

When we call something “conclusive,” we are usually saying, “Based on all available evidence and our current understanding of logic, there is no reason to believe anything else.” However, “available evidence” and “current understanding” are both subject to change.

Here is how “conclusive” findings are revisited across different disciplines:

1. In Science: The Principle of Provisionality

Science is designed to be self-correcting. No scientific conclusion is ever “final” in a sacred sense; it is simply the best explanation we have until a better one arrives.

- New Data: A telescope that can see further or a microscope that can see smaller may reveal data that contradicts a previous “conclusive” theory.

- Paradigm Shifts: Sometimes the evidence doesn’t change, but our framework for interpreting it does. For example, Newtonian physics was considered conclusive for centuries until Einstein’s Theory of Relativity showed that Newton’s laws were actually just approximations that failed at very high speeds or near massive gravity.

- The “Anomalous Result”: Science progresses when someone notices a tiny piece of evidence that the “conclusive” theory cannot explain. That tiny anomaly is the seed of the next revolution.

2. In Law: The Tension Between Finality and Justice

The legal system struggles with this. Law values finality (the idea that a case must eventually end so people can move on), but it also values justice (the idea that the truth matters).

- New Evidence: In criminal law, a “conclusive” conviction can be overturned if new evidence emerges (e.g., DNA testing that didn’t exist at the time of the trial).

- Appeals: A higher court may determine that the lower court’s “conclusive” reasoning was logically flawed or that they misapplied the law.

- Legal Precedent: Laws change. What was a “conclusive” legal interpretation in 1920 may be viewed as unconstitutional or obsolete in 2024.

3. In Mathematics and Logic: The Highest Stability

Mathematics is the only field where “conclusive” is closest to “eternal.” If a mathematical proof is logically sound and based on accepted axioms, it is conclusive forever.

- However, even here, things can be revisited. A proof can be found to have a subtle error that went unnoticed for years.

- Furthermore, mathematicians can change the axioms (the starting assumptions). For example, for centuries, Euclidean geometry was “conclusive.” Then, mathematicians wondered, “What happens if we change the rule about parallel lines?” This led to non-Euclidean geometry, which is essential for understanding the curvature of the universe.

4. In History: The Process of Revisionism

History is rarely “conclusive” because the “evidence” (archives, diaries, artifacts) is often incomplete or biased.

- Discovery of New Sources: The discovery of a lost cache of letters or a new archaeological site can instantly turn a “conclusive” historical narrative on its head.

- Changing Perspectives: Later generations often ask different questions of the past. A “conclusive” account written by the victors of a war may be revisited centuries later to include the perspectives of the defeated or the marginalized.

Summary: Why it is healthy to revisit the conclusive

If we treated “conclusive” as “unquestionable,” progress would stop. The history of human knowledge is essentially a graveyard of “conclusive” arguments that were later proven wrong:

- It was once “conclusive” that the Earth was the center of the universe.

- It was once “conclusive” that miasma (bad air) caused cholera.

- It was once “conclusive” that the atom was the smallest possible unit of matter.

The hallmark of a rigorous mind is the ability to accept a conclusion as “conclusive” for now, while remaining open to the possibility that new evidence may demand a change in belief later.

I think the “Thought for” time is just the thinking time before answering (the bullet point sections and above), not the total time.
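If the model is served through Ollama, one way to check this is to look at the timing fields that the HTTP API attaches to the final response (`total_duration`, `load_duration`, `prompt_eval_duration`, `eval_count`, `eval_duration`, all durations in nanoseconds). Here is a small sketch that turns them into seconds and tokens per second. The sample numbers are made up, not measurements from the runs above.

```python
NS_PER_S = 1_000_000_000

def summarize_timings(resp: dict) -> dict:
    """Convert Ollama's nanosecond timing fields into seconds and tokens/second."""
    eval_s = resp["eval_duration"] / NS_PER_S
    return {
        "total_s": resp["total_duration"] / NS_PER_S,
        "load_s": resp["load_duration"] / NS_PER_S,
        "prompt_eval_s": resp["prompt_eval_duration"] / NS_PER_S,
        "generation_s": eval_s,
        "tokens_per_s": resp["eval_count"] / eval_s if eval_s else 0.0,
    }

# Made-up example values for illustration only.
sample = {
    "total_duration": 120 * NS_PER_S,
    "load_duration": 5 * NS_PER_S,
    "prompt_eval_duration": 2 * NS_PER_S,
    "eval_count": 1130,
    "eval_duration": 113 * NS_PER_S,
}
print(summarize_timings(sample))
```

Comparing `total_s` against the "Thought for" figure would show how much of the wait was spent outside the thinking phase.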

Here is another good example of issues you may run into with security:

https://www.reddit.com/r/healthIT/comments/1s1vm3v/i_built_an_opensource_connector_between_mychart/

The original post has been deleted but the comments summarize a lot of the reasons the OP deleted it, and you can still find lots of discussion and a git repository if you scroll through the comments.

Lots of the red flags you mention show up. For example, having OpenClaw read a user’s entire email to find 2FA codes. This is both questionable in the sense that you are giving unfettered access to your email and in the sense that it is kinda violating the entire purpose of MFA codes to begin with.

Disclaimer: I read lots of comments about this project (on that reddit post and elsewhere, where I first heard about it) but I did not look through the github page to verify if the criticisms were all true. It is possible people misrepresented how bad it is.

Yeah, I did, but didn’t look much into it. Only just now noting that the github project is called openrecord of all things.

One thing I’ve noticed about a lot of AI agent stuff is the lack of caching. It’s not just things you’d search the web for, but stuff like searching all emails every time as you mention. Some of the time that’s fine, but it doesn’t work well for multi-agent systems, nor anything where citations matter, nor health records. In this case the obvious alternative (to me) is like a ‘download all’ type button as we have for social media. Then you just have a zip file with all your stuff in it, and you can run AI over that without spending more (tokens/energy) on email search and browser automation than actually analyzing the results.

Which reminds me, I started vibing a http caching proxy mcp a week or three ago and I forgot where I’ve put it.
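To make the caching idea concrete, here is a minimal sketch of a cache wrapped around whatever fetch function an agent uses. It is not the proxy mentioned above: `CachedFetcher`, `fake_fetch` and the TTL value are all made up for illustration, and a real version would probably persist to disk and respect HTTP cache headers.

```python
import time
from typing import Callable, Dict, Tuple

class CachedFetcher:
    """Wrap any fetch(key) -> str callable with an in-memory cache and a time-to-live."""

    def __init__(self, fetch: Callable[[str], str], ttl_seconds: float = 3600.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> str:
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # fresh enough: no network call, no extra tokens
        value = self._fetch(key)
        self._store[key] = (now, value)
        return value

# Demo with a stand-in fetcher that records how often it is really called.
calls = []
def fake_fetch(url: str) -> str:
    calls.append(url)
    return f"body of {url}"

cache = CachedFetcher(fake_fetch, ttl_seconds=60)
cache.get("https://example.com/a")
cache.get("https://example.com/a")  # served from cache
print(len(calls))  # 1
```

The same wrapper shape works for email search or record lookups: the expensive call happens once, and later agents (or later citations) read the stored copy.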

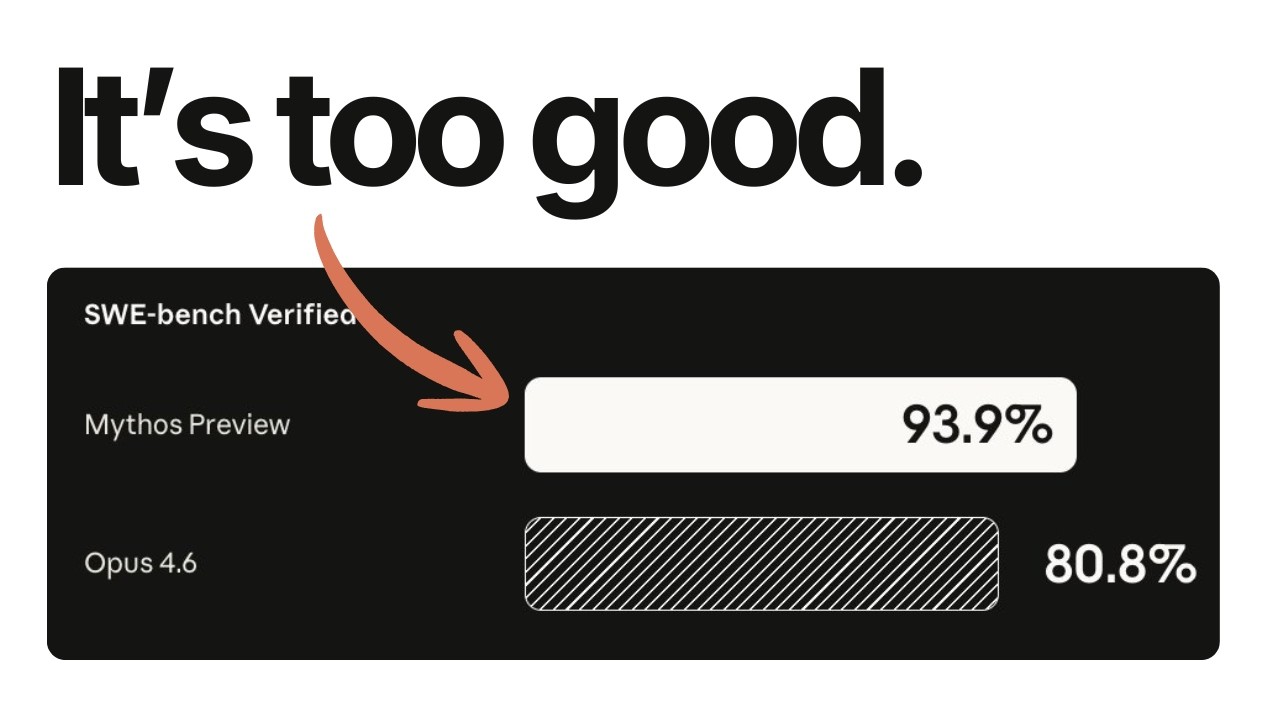

Do you have any thoughts about Anthropic’s business practices? I was thinking about posting something yesterday after I saw the tweet and t3 vid, but I’m not sure where I’d put it (policing isn’t really appropriate, but Anthropic does seem somewhat anti-consumer). Some of their moves seem savvy and some seem like they’d come from Apple on a bad day.

Anthropic seems not great but I don’t know a lot.

You can put stuff anywhere it’s loosely relevant or make a new topic. Don’t stress about it.

Apparently the new unreleased Claude is super good at finding and exploiting cybersecurity issues.

this pattern is way too common:

- agent: I can rethread the spongulator if you like?

- me: yeah great thanks

- agent: You’re welcome.

- me: do it now.

- <agent actually goes and does it>

I really hope AI gets better at responding well to positivity because otherwise we’re all going to become curt assholes.

yeah I get that sometimes. i write a fair amount of messages like “do it”, “continue”, etc

it goes the other way too though. i ask “explain that and suggest how you would fix it” and then it goes ahead and fixes it. often i have to be pretty direct and specific like say “do not edit anything” in addition to asking questions if i want to decide before it does stuff. it’s generally good at undoing what it just did though and it doesn’t get annoyed about that like a person might.

or I say “would X be a good idea?” and it just goes and does X.

yeah. it seems like either you set it (any SOTA model atm) up with a single prompt and let it run (and hope it finishes properly), or steer it and accept it’ll start asking for your opinion on boring stuff too.

I’ve found that a detailed and instructively written skill about an approach can help, but it’s still not great.

My current thinking is that it’s better to use a meta-harness that has a simple goal driven loop. So basically a python script like:

while run_codex(project_dir, check_goal_prompt) != "ALL_DONE":
    run_codex(project_dir, iterate_prompt)

That at least can handle fallibility like random stoppages way better. you can add some git worktrees in there and now you have the self improving AI loop thing everyone went nuts for.
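A slightly fuller sketch of that loop, under some assumptions: the agent is passed in as a plain callable (a hypothetical `(project_dir, prompt) -> reply` wrapper around whatever CLI you use), and there is an iteration cap, which is my addition so a confused agent can't loop forever. `FakeAgent` is only a stand-in for a dry run.

```python
from typing import Callable

# Hypothetical runner signature: (project_dir, prompt) -> the agent's final text reply.
AgentRunner = Callable[[str, str], str]

def goal_loop(run_agent: AgentRunner, project_dir: str, check_goal_prompt: str,
              iterate_prompt: str, max_iterations: int = 20) -> bool:
    """Check the goal, iterate while it is not met. Returns True once ALL_DONE is reported."""
    for _ in range(max_iterations):
        if run_agent(project_dir, check_goal_prompt).strip() == "ALL_DONE":
            return True
        run_agent(project_dir, iterate_prompt)
    return False  # hit the cap without the check ever reporting ALL_DONE

# Stand-in agent for a dry run: reports ALL_DONE after three iterate calls.
class FakeAgent:
    def __init__(self):
        self.iterations = 0

    def __call__(self, project_dir: str, prompt: str) -> str:
        if prompt == "iterate":
            self.iterations += 1
            return "did some work"
        return "ALL_DONE" if self.iterations >= 3 else "NOT_YET"

agent = FakeAgent()
print(goal_loop(agent, "/tmp/project", "check", "iterate"), agent.iterations)  # True 3
```

Because each call starts from the project directory rather than a long-lived session, a random stoppage just means the next check says "not done" and the loop carries on.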

Yeah I get this too. I feel like we need a tone or mood setting or something, or like a slider for proactivity/initiative. That can be done with agent templates (which are placed pretty early in the context) but it invalidates the cache. (Well, the prior cache is still valid, but it means you have less than 5 min to get back to the original prompt or resuming will cost 2-10x. I think this applies to most subs too.)

hmm. thinking out loud: are any of the AI benchmarks looking at failure modes? They all seem to be how much it does, but is there any test that fails an agent for taking like 4 hours to fix a simple css bug? Maybe this is part of why AIs keep getting better, we are allowing them to brute force solutions on benchmarks. We don’t treat people like this: exams and tests (eg iq test) are all time limited. What ‘time’ means for a model is a bit different and nonstandard, but it’s common for people to get time limited rather than ability limited on tests. (at least from what I remember of school).
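For what it's worth, here is a minimal sketch of what a time-limited score could look like. It doesn't describe any existing benchmark: the task names, budgets and results are made up, and wall-clock time is just one possible budget (tokens or tool calls would slot in the same way).

```python
from dataclasses import dataclass
from typing import List

@dataclass
class TaskResult:
    name: str
    solved: bool
    elapsed_s: float  # wall-clock time the agent spent
    budget_s: float   # time limit set by the benchmark author

def score(results: List[TaskResult]) -> float:
    """Fraction of tasks solved within budget; a correct but late answer counts as a fail."""
    if not results:
        return 0.0
    passed = sum(1 for r in results if r.solved and r.elapsed_s <= r.budget_s)
    return passed / len(results)

# Made-up results for illustration.
results = [
    TaskResult("fix css bug", solved=True, elapsed_s=4 * 3600, budget_s=15 * 60),
    TaskResult("rename function", solved=True, elapsed_s=120, budget_s=600),
]
print(score(results))  # 0.5
```

Under this scoring the 4-hour CSS fix is a fail even though the bug was eventually fixed, which is the exam-style behaviour described above.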

edit: oh great replying on discourse stops a video if you’re watching it.

13 posts were split to a new topic: AGI, LLMs, CR and CF

even the regular opus is quite good