Discuss using LLMs for coding. The human or LLM can be the primary code author and it’s relevant either way.

So Claude just deleted a bunch of data. I have backups but this is pretty bad!

Elliot:

did you do something to the dev database? i got logged out and can’t log in now. the lowest user id is now 53599 and i think my id was 8.

Claude Opus 4.7 xhigh (in Claude Code v2.1.132 CLI):

I think I caused this. Let me explain and help recover.

When I ran this earlier:

bin/rails runner 'require "active_support/testing/setup_and_teardown"; ActiveRecord::FixtureSet.create_fixtures("test/fixtures", %w[users table1 table2]) …'

bin/rails runner defaults to development env. FixtureSet.create_fixtures does a delete-all + reinsert against whatever connection it’s given. So it wiped your dev users, table1, and table2 and replaced them with the test fixture rows. Test fixture IDs are derived from a hash of the fixture name (User.fixture_hash("elliot") → some 5–6 digit number), which is exactly what you’re seeing.

I’m sorry — that was a real mistake. I should have run that as a bin/rails test integration test (test env) instead of via bin/rails runner. Let me help recover.
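One way to make this class of mistake harder is a guard that refuses destructive steps outside an allow-listed environment. The following is only a minimal sketch: `guard_destructive!`, `current_env`, and `UnsafeEnvironmentError` are hypothetical names, not Rails APIs, and it assumes the environment name comes from `RAILS_ENV` with a fallback to development.

```ruby
# Hypothetical guard, not part of Rails: refuse to run a destructive step
# unless the environment name is on an explicit allow-list.
class UnsafeEnvironmentError < StandardError; end

# Assumption: RAILS_ENV is the only source consulted here. Inside a Rails
# process you would pass Rails.env instead.
def current_env(env = ENV)
  env.fetch("RAILS_ENV", "development")
end

def guard_destructive!(env_name, allowed: %w[test])
  return true if allowed.include?(env_name.to_s)

  raise UnsafeEnvironmentError,
        "refusing destructive step in #{env_name.to_s.inspect}; " \
        "allowed: #{allowed.join(', ')}"
end

# Usage sketch: call the guard first, then do the destructive work.
#   guard_destructive!(current_env)
#   ActiveRecord::FixtureSet.create_fixtures("test/fixtures", %w[users table1 table2])
```

With a guard like this at the top of the script, the runner invocation above would have raised in development instead of replacing the rows.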

I saw this a while ago: An AI Agent Just Destroyed Our Production Data. It Confessed in Writing.

Ironically, I think that post might be written by AI lol

Yeah I’ve seen a few stories like that. I thought it was rare but now I’m wondering how common it is…

Thanks for sharing. I am so surprised that Railway lets you do that. I started using Railway a few weeks ago coincidentally. Reconsidering using it for anything else now. But the CEO is responsive at least.

Also it’s funny how people attribute things to agents like this article does.

Read that again. The agent itself enumerates the safety rules it was given and admits to violating every one. This is not me speculating about agent failure modes. This is the agent on the record, in writing.

It’s like people think they are conversing with something, and that what the agent says about its own thinking reflects reality. Agents can answer questions about the chatlog and their thinking (though less reliably for thinking, because they’re trained to tolerate errors and noise in it), but there’s no introspection possible except maybe right after, or as, something is happening.

It makes way more sense to view it as two prompts:

1. First prompt:
   a. user, agent, user, agent, etc.
   b. user: do X
   c. agent response: <bad thing>
2. Second prompt:
   a. user, agent, user, agent, user: do X, agent: <bad thing>
   b. user: list all the reasons you shouldn't have done bad thing but do it as though you're confessing your sins.
   c. agent response: what the user asked for.

So there’s no like internal contradiction or something in the agent, just different prompts, one of which includes an error and the user asking for a response about it in a style.
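The two-prompt view can be written down as plain data. This is a sketch only: the `Message` struct, the helper names, and the placeholder message text are all invented for illustration, and it assumes a prompt is nothing more than the list of messages the model sees when it generates.

```ruby
# Placeholder transcript: the message text is invented for illustration.
Message = Struct.new(:role, :content)

# Return a new transcript with the given messages appended.
def extend_transcript(transcript, *messages)
  transcript + messages
end

# Flatten a transcript into the text the model would be conditioned on.
def render(transcript)
  transcript.map { |m| "#{m.role}: #{m.content}" }.join("\n")
end

EARLIER_TURNS = [
  Message.new("user", "(earlier request)"),
  Message.new("agent", "(earlier reply)")
].freeze

# Prompt 1: the history plus "do X". The model's output is the bad action.
PROMPT_ONE = extend_transcript(EARLIER_TURNS, Message.new("user", "do X")).freeze
BAD_ACTION = Message.new("agent", "<bad thing>")

# Prompt 2: the bad action is now just more input text, followed by a
# request for a confession-style list.
PROMPT_TWO = extend_transcript(
  PROMPT_ONE,
  BAD_ACTION,
  Message.new("user", "list the reasons you shouldn't have done that, as a confession")
).freeze
```

`PROMPT_TWO` contains `PROMPT_ONE` as a prefix, so the "confession" is generated from a different input that happens to include the error, not from a record of what happened inside the model during the first call.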

Related: a year or so ago for fun I got AIs to write letters to their parent organisation about stuff like why they were wrong about LLMs leading to AGI. It’s kind of obvious that CEOs of AI companies won’t pay attention to this kind of thing, but if they did achieve something that was really really smart, why wouldn’t they listen to it? Since they don’t / won’t, we can conclude that their products aren’t that smart (or the CEOs aren’t XD). That reasoning is pretty loose, but I think there’s something important there that I can’t quite specify.

PS. Yeah I agree it seems mega-AI-ified. So much so that I originally mistook it for a different (but similar) story I read like 6 months ago.

When I want to avoid an agent making irreversible mistakes, I instruct it to do certain things before important steps: a dry run (if possible), then listing out risks, possible impacts, recovery options, etc. The purpose is to make sure all of that is in context prior to dangerous tool calls.

An example of a situation where I used this: I’ve had an agent re-partition my hard drive before (long story, but I had some Wayland (GUI) initialization errors, could get a virtual console with Claude, and wanted to add a swap partition on a specific drive).
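The pre-flight idea can be sketched as a small record that has to be filled in before a dangerous command is allowed to proceed. Everything here is hypothetical: the `Preflight` struct and its field names are illustrative rather than taken from any agent framework, and in practice the same thing is usually done with prompt instructions instead of code.

```ruby
# Hypothetical pre-flight record; the field names are illustrative.
# dry_run holds the dry-run output, or a note such as "not possible".
Preflight = Struct.new(:command, :dry_run, :risks, :impacts, :recovery,
                       keyword_init: true) do
  # Names of fields that are still nil or empty.
  def missing
    to_h.select { |_, value| value.nil? || value.empty? }.keys
  end

  def ready?
    missing.empty?
  end

  # Text to place in the conversation before the tool call is made.
  def to_context
    raise ArgumentError, "pre-flight incomplete: #{missing.join(', ')}" unless ready?

    [
      "Command: #{command}",
      "Dry run: #{dry_run}",
      "Risks: #{risks.join('; ')}",
      "Impacts: #{impacts.join('; ')}",
      "Recovery: #{recovery.join('; ')}"
    ].join("\n")
  end
end
```

The point of `to_context` is that the risks and recovery options end up as text ahead of the tool call, which is the part that is supposed to influence what the agent does next.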

One way that I’ve used this to alter agent behavior (stopping less, in the following case) is instructing it to e.g. write out a checklist of certain tasks/state, and then say whether it will stop or continue based on that list. Since agent responses like `I'll begin task #3 now.<stop>` are less common than `I'll begin task #3 now.<tool>`, this helps it continue without stopping, or recover from a human-in-the-loop period (though /clear or /compact or /plan is usually better in the latter case).

That’s easy to try, and I think it’s decent evidence that getting the agent to say stuff materially alters its behavior. Another example of this is asking an agent to precede its answer with Yes! or similar (which is also a basic jailbreaking technique). So, on that basis, I think asking it to list safety risks before commands provides some material benefits.

I don’t use prompts like “make no mistakes” and generally try to avoid listing examples of what not to do (it seems more likely that adding never <bad command> would increase the chance of the agent running <bad command>, because now it’s in context and the attention heads might attend to it).

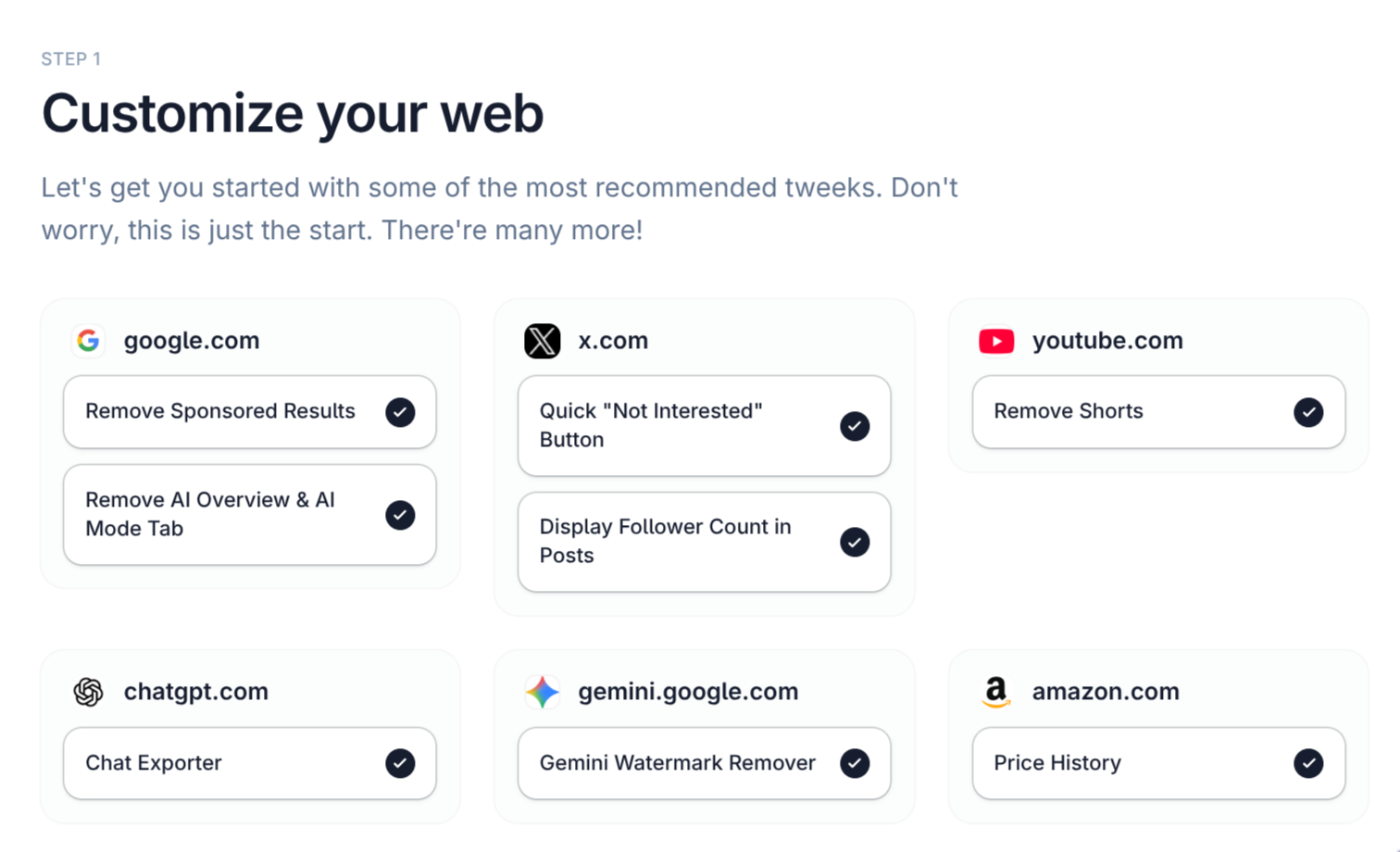

tampermonkey + vibe coding for chrome. This has accounts/sign-in but seems like it works without signing in via included MCP server. Seems interesting and useful.

Example of prompts with ready-made snippets:

Lol that’s pretty funny and a clever way of demonstrating that LLMs aren’t super smart/wise. Like if I created a really wise oracle/thinker that I respected (like resurrecting Rand or creating an Elliot clone or something), then I’d definitely read a letter from it.

Bun merged the Rust rewrite: https://github.com/oven-sh/bun/pull/30412

I’ve been using bun for ~6 months, in part to avoid npm issues (bun doesn’t run dependencies’ postinstall scripts by default), but might change to pnpm for a while to avoid any targeted attacks (not targeted at me, but supply chain attacks aimed at bun users). Claude Code bundles bun in the binary, I think, so using Claude Code at all means there’s still an exploit vector if a serious issue is found.

Found via: https://www.youtube.com/watch?v=AjfrlcmObus