This is a companion discussion topic for the original entry at https://www.youtube.com/watch?v=eQqtdN4As_A

Script

I really like Eli Goldratt’s philosophy, the Theory of Constraints. What’s it about?

Goldratt’s goal was to teach the world to think. He studied physics and thought the methods of the hard sciences should be used in other fields too. He developed ideas about thinking methods and applied them to business management. He thought businesses could get huge benefits from more rational, rigorous, organized thinking. He was a successful business consultant and sold millions of books.

Theory of Constraints, or TOC for short, says we need to focus our attention and efforts on the most important issues. To do this, look for constraints, a.k.a. bottlenecks, which are parts of a system that limit its throughput or success at your goals. Most factors in a system have excess capacity – they aren’t the weakest link – so improving them won’t result in better system performance. Find the factors that are limiting your results and focus on improving those.

Don’t optimize local optima. That means avoid improving individual parts of a system that won’t lead to better results at your goals. Most parts of a functional system are already good enough.

TOC also has ideas about problem solving, conflict resolution, contradictions, simplicity and much more. I’ve found TOC’s ideas really helpful for creating a deeper understanding of epistemology. Goldratt emphasized a lot of concepts that Karl Popper didn’t write about, and his ideas are mostly compatible with Popper’s, so the two thinkers complement each other well.

I wrote an essay covering around twenty TOC ideas. This video will discuss a few key ideas from the essay.

The Goal

Goldratt’s most popular book, The Goal, is a novel about the manager of a struggling factory. He improves the factory and illustrates TOC thinking methods and concepts.

The book emphasizes figuring out what your goal is, then figuring out what limiting factors are reducing your success at your goal. Most potential improvements won’t actually help with your goal much because they don’t address limiting factors, a.k.a. constraints or bottlenecks. Related to this, he explains why equal capacity for each workstation is the wrong way to design a factory.

Constraints

Marris Consulting focuses on TOC. Their logo illustrates a constraint with water flowing down through tanks.

Our goal is to get water to the bottom. The middle spout is the constraining factor for water flow. The other spouts have excess capacity, so making them bigger wouldn’t increase the output of the whole system.

If you look at each spout individually, making it bigger seems useful. But that’s the trap of local optima. Looking at the bigger picture, we see that improving some spout sizes won’t help with our goal.
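
The tank picture can be put into numbers with a minimal Python sketch. The spout names and flow rates below are made up for illustration, and the model assumes the system's output is simply the smallest spout's capacity.

```python
# Hypothetical spout capacities in liters per minute (made-up numbers).
spouts = {"top": 10, "middle": 4, "bottom": 8}


def system_throughput(capacities):
    """Water reaching the bottom is limited by the smallest spout."""
    return min(capacities.values())


def constraint(capacities):
    """Name of the spout that limits the whole system."""
    return min(capacities, key=capacities.get)


baseline = system_throughput(spouts)

# Local improvement: doubling a spout that already has excess capacity.
bigger_top = dict(spouts, top=20)
# Improvement at the constraint: widening the middle spout.
bigger_middle = dict(spouts, middle=6)

print(constraint(spouts), baseline)
print(system_throughput(bigger_top))
print(system_throughput(bigger_middle))
```

In this toy model, doubling the top spout leaves the output unchanged, while a smaller change at the middle spout raises it.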

Focusing Steps

TOC’s five focusing steps are a method for making improvements.

First, find the constraint. Then optimize it. Stay focused and don’t optimize other parts of the system.

If you need further improvement, organize the whole system around the constraint. Change other parts in ways that make things easier for the constraint. Doing two hours of work somewhere else to save an hour at the constraint can be efficient.

To improve further, increase the capacity of the constraint. Notably, don’t do this earlier. It’s usually more efficient to make other changes first.

After adding resources to the constraint, check if it’s still the constraint. If the constraint moved, return to step one.
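
Here is a rough Python sketch of the last part of that loop: find the constraint, add capacity there, then check whether the constraint moved. It only models finding and elevating the constraint, not optimizing it or organizing the system around it. The workstation names, capacities and the `elevate_until` helper are hypothetical.

```python
def find_constraint(capacities):
    """Step one: the workstation with the least capacity."""
    return min(capacities, key=capacities.get)


def elevate_until(capacities, target, step=1):
    """Add capacity only at the current constraint until the system reaches
    the target throughput. Returns the new capacities and the order in which
    constraints were elevated."""
    capacities = dict(capacities)  # work on a copy
    history = []
    while min(capacities.values()) < target:
        current = find_constraint(capacities)  # re-check every time
        capacities[current] += step
        history.append(current)
    return capacities, history


# Hypothetical widgets-per-hour capacities.
factory = {"cutting": 9, "welding": 5, "painting": 7}
final, history = elevate_until(factory, target=8)
print(history)
print(final)
```

In this example the constraint starts at welding and later moves to painting, while cutting never receives any attention because it never limits the system.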

Changing Steps

Changing can be a lot of work. Especially when a lot of people or money is involved, we want to make the right change on our first try.

Goldratt’s method begins with considering what to change. You want to pinpoint core problems related to constraints. You can use the effect-cause-effect method explained in my essay.

Second, consider what to change to. Look for simple, practical solutions. You can use Goldratt’s evaporating clouds method from my essay.

Third, changes need to be done in the right way. One good approach is using the Socratic method. You can ask questions to help people figure out the change themselves. If you just tell people your answers, they often won’t create enough understanding to implement the change well.

Balanced Factories

A balanced factory design tries to avoid excess capacity in order to be efficient. And it tries to avoid having a bottleneck or weakest link by giving every part of the factory just the right amount of capacity.

Goldratt’s insight is that balanced factories are inefficient. The main reason is statistical fluctuations. Production won’t actually proceed in an orderly, balanced way due to random variations in output. A worker who averages ten widgets per hour will sometimes produce zero, five, fifteen or twenty.

Also, the costs for different kinds of production capacity vary. We don’t need to carefully minimize the amount of capacity for cheap stuff. We should focus our attention and optimization on the expensive types of capacity.

Statistical Fluctuations

When you have a chain of dependencies, and statistical fluctuations, bad luck propagates down the whole chain, and good luck doesn’t cancel out prior bad luck. This is a mathematical fact that can be simulated on computers. It can also be understood with the simple matchstick game from The Goal.
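
As a sketch of what such a simulation could look like, here is a small Python version of a dice game in the spirit of the matchstick game. The rules are an assumption for illustration, not a transcription of the book's rules: five stations in a row, each rolls a six-sided die per round, and each can pass along at most its roll and at most what is waiting in front of it.

```python
import random


def play_game(stations=5, rounds=10, rng=random):
    """Play one game and return how many items leave the last station."""
    waiting = [0] * stations  # waiting[i] = items sitting in front of station i
    finished = 0
    for _ in range(rounds):
        for i in range(stations):
            roll = rng.randint(1, 6)
            if i == 0:
                moved = roll  # the first station has unlimited raw material
            else:
                moved = min(roll, waiting[i])  # cannot move what has not arrived
                waiting[i] -= moved
            if i + 1 < stations:
                waiting[i + 1] += moved
            else:
                finished += moved
    return finished


def average_output(trials=2000, seed=0):
    """Average finished items per game over many simulated games."""
    rng = random.Random(seed)
    total = sum(play_game(rng=rng) for _ in range(trials))
    return total / trials


naive_prediction = 3.5 * 10  # each station averages 3.5 per roll, over 10 rounds
print(naive_prediction, average_output())
```

Each station averages 3.5 per roll, so looking at stations in isolation suggests 35 finished items in ten rounds. The simulated average comes out lower, because a low roll upstream starves everything after it and a later high roll can only move what has actually arrived.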

What’s the solution?

A factory’s constraint should have a buffer in front of it with extra input parts so it never has to waste time waiting when there’s a delay at a workstation that comes earlier in production.

And each workstation besides the constraint should have excess capacity.

Workstations before the constraint need to work faster than the constraint to catch up and refill the buffer when bad luck depletes it. That means they have unequal capacity compared to the constraint. When the buffer is full, they should work slower or halt work part of the time. Although it may be counter-intuitive, that’s efficient.

Workstations after the constraint need excess capacity so they can keep up when there’s good luck at the constraint. That also means they won’t operate at maximum capacity most of the time.
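
A rough Python sketch of the buffer idea, under simplified assumptions: one feeder workstation with random output, one constraint that can process a fixed three parts per round, and a capped buffer between them. All numbers are hypothetical, and workstations after the constraint are not modeled.

```python
import random


def constraint_utilization(feeder_max, rounds=20000, constraint_rate=3,
                           buffer_cap=12, seed=0):
    """Fraction of the constraint's capacity that actually gets used."""
    rng = random.Random(seed)
    buffer = buffer_cap  # start with a full buffer
    processed = 0
    for _ in range(rounds):
        made = rng.randint(0, feeder_max)  # feeder output varies randomly
        # The feeder slows down or halts once the buffer is full.
        buffer = min(buffer_cap, buffer + made)
        done = min(constraint_rate, buffer)  # the constraint starves if short
        buffer -= done
        processed += done
    return processed / (rounds * constraint_rate)


balanced = constraint_utilization(feeder_max=6)  # feeder averages 3, same as the constraint
excess = constraint_utilization(feeder_max=9)    # feeder averages 4.5
print(round(balanced, 3), round(excess, 3))
```

In this toy model the feeder with excess capacity sits idle part of the time, yet the constraint stays busier than in the balanced setup, which is the counter-intuitive point above.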

Conclusion

The world is complex and you can read TOC books for more details. But, generally speaking, real situations involve a small number of key factors and a large number of other factors that have excess capacity and don’t need to be improved. A system where a large number of factors all needed lots of attention and optimization would actually be unmanageable. To be effective, we need to focus our attention on a few things.

My essay has more TOC ideas that this video didn’t cover. The link is below.

I don’t know how to make the YouTube thumbnail update here.

The title is the file name, I think because I sent the video in a DM here and it got cached then. The correct title was set before this topic was created.

And for the image, I’m using YouTube’s new feature where you upload 3 thumbnails and it tests out which performs best. I put the anime one first without thinking it mattered, but then I realized that was a mistake and the first one seems to get used as the default in some places so I changed it to a more plain one first, but it didn’t update here.

Based on “Lazy loading YouTube videos doesn't update video thumbnail” (Support, Discourse Meta), I’m not very hopeful about fixing this, but if anyone figures out a troubleshooting step to try, let me know.

Also I realized that sometimes YouTube doesn’t display the full thumbnail so text near the edges can be a bad idea. Oh well. I’ll try to remember that next time.

Thanks to @LMD for helping with the audio quality for this video. (If the audio seems worse to you than previous videos in any way, please tell me!)

In YouTube comments, ActuallyThinking asked:

TOC seems to be a very good mental model and framework for identifying problems and fixing material inefficiencies. It seems to not say much about concept formation or how knowledge is acquired which are the traditional concerns of epistemology. I am, therefore, a bit confused about Goldratt’s ideas creating “a deeper understanding of epistemology” for you. To paraphrase Rand, epistemology is concerned with knowing “how” one knows and not with “what” one knows. Would you say that epistemology is both the “how” and the “what”? If so, it seems to conflate the physical sciences and philosophy which seems to me as a distinction worth keeping.

I replied:

Goldratt doesn’t talk about concept formation and not much about traditional epistemology. I was able to take many of his ideas and apply them to my own epistemology to improve it. I, using my own initiative not just passively listening to Goldratt, was able to make his ideas relevant and useful. This comes up a fair amount in some of my existing CF essays and I plan to cover it more in the future.

He replied:

Thanks for the response. I am semi-familiar with your work and your improvements on CR but I need to better understand you and the ideas before I can give some commentary. I have been grappling a bit with some parts and will come back when I have better formulated questions. Thanks for your efforts.

The title and thumbnail updated for me. I don’t know when or why.

The reply below is kind of disorganized cuz i’m analyzing the article sometimes but other times I’m relating it to me:

Knowing what the important issue/s would be good. Like, I wonder how often important issues are addressed in general. I think I talked about this before in another post.

The words “most important issues” stand out cuz they seem to be key for making progress, I think. I think for making good improvements.

The way this sentence connects to the last one:

“This” means “to focus our attention and efforts on the most important issues.”

I don’t think “This” means “we need to focus our attention and efforts on the most important issues.”

The part after, “which” is telling us what bottlenecks are but also telling us that throughput is important. That’s cuz why even look for the bottlenecks to begin with? Also, the sentence talks about a system and parts of it which means that there is a system to look out for.

So the factors are part of that system that was mentioned in the last sentence. I think if you know what a factor is in a system then you can know more about them–that most of them have excess capacity.

The “they aren’t the weakest link” uses the phrase “the weakest link.” I think that’s similar to the phrase “weak link,” but I don’t know if they mean the same thing. But that part of the weakest link tells us what it means to have excess capacity. It means that that factor isn’t the weakest link.

I think the second clause of the sentence tells us about how to think of most factors in a system. If you improve them then the system doesn’t get better. I say, “get better,” but I think that’s kind of different than, “better system performance.” I think if you don’t get better system performance then that means your throughput isn’t going up and that may mean you didn’t find the bottleneck. That or maybe you’re not optimizing it right.

This sentence is telling us what to do with the factors that don’t have excess capacity. I think since most factors have excess capacity then only a few don’t have excess capacity?

The sentence tells us what kind of factors to find. It’s the factors that limit your results.

The sentence is made of two steps, you find the factors and then focus on improving them.

I think “the factors that are limiting your results” is another way to think of what is the weakest link.

Would be good to use local optima in my vocab to get a feel for what a local optima is. That way I can be like “oh, this is a local optima and that is a local optima.”

What I don’t know: Idk how to see local optima in everyday life. I only see it with examples provided. Even then if I do, I question if im understanding it right. If I’m able to find local optima in an example or article, why not do it for everyday things? I don’t think im actually picking up a local optima. Maybe something that seems like it. Hardest thing of all is idk where to start identifying local optima, like idk how to use my understanding to know if I’m making an error or not.

I’ve seen improving local optima happen a few times. I think it’s when I try hard in one area but I don’t reach my goal. I think an example I’ve seen is in hero shooters. Both teams are killing each others’ backlines and even tho I attack their backline hard as a tank, they kill my backline first and then wipe my whole team. But, if I do something different and peel then we survive and may win the team fight. I don’t know how accurate or one to one that is to optimizing a local optima

Post mortem about why I don’t know if my example is a good example of a local optima.

Why don’t I know how one to one my example is to a local optima?

Because I don’t know what the constraints and the parts of the system are all that well, so I don’t know what a local optima is in that system. I know the goal kind of but not that well I think.

Why don’t I know the parts of the system or constraints?

Shoot I forgot what a constraint is and if it has to do with raw numbers like a machine making 10 widgets an hour. Also, I don’t keep track of parts of a system cuz that’s a lot of numbers to keep track of.

I think an example in another area would be making food at McDonald’s. There are burger patties and some nuggets to be cooked. If an order of burgers comes through and I end up making nuggets instead then that is improving an individual part of the system that doesn’t lead to better results. Hm, now I’m wondering if ‘improving’ applies in the example or not. If this is even a good example cuz I’m just cooking the food. Does that equal improving?

conflict resolution:

z: do an example, talk about things correctly, solve the problem of making my example a good one

x: Define ‘improve’ so I know that cooking items to restock means I’m improving.

y: find if ‘improving’ means ‘optimizing’ cuz the sentence before the quote above talked about ‘optimizing’ not ‘improving.’ If ‘improving’ means optimizing or similar then I’ve seen that ‘optimizing’ means that you can make things better for the constraint, like you don’t make other parts of the system cause a burden on it. I think if you have the nuggets wait before making the patties in the example then I think that’s optimizing the constraint.

In the quote above I see that it says something, like don’t improve parts of a system that doesn’t lead to better results. Does making any results count? What if you’re making 0 results? going from 0 to >0 is better. It’s numerically more. I think there’s probably a context where making any progress is good. It’s like figuring out how to do a skill at all. If you figure it out at all, that’s something you can learn to automatize. That sounds good. Like you’re on the right track probably. Idk.

Post-Mortem about why I don’t know if >0 as a result counts as a better result.

Why don’t I know if better results at your goals means >0?

Because I don’t know what the goal could be. I think if you’re literally getting better results at your goals, which could be to make some product, then the result of >0 could satisfy your goal. I think from there you want to work your way up cuz how much do you want of your goal?

It’s interesting that if the system is functional then most parts are good enough. Is a non-functional system a system that doesn’t have success at its goal? How much success tho? Idk.

Post Mortem about why idk how much success a system has to have for it to be considered functional.

Why don’t I know how much success a system has to have for it to be functional?

Cuz i don’t know if there’s a number for it to satisfy the criteria of “functional.” That and I don’t know what the meaning of functional is. That and idk if im all that right that if the system is functional then that means it’s accomplishing its goal.

Why am I not so sure that a functional system means that it has success at its goal?

Cuz idk if that’s the right line of thought that just cuz we’re talking about functional it doesn’t mean we’re talking about a success at a goal. I think functional is related to having a purpose.

It makes sense to me in a way that a functional system has most parts that are good enough cuz if it didn’t then I don’t think the system would be so good at having success at its goal. Does that mean if the goal is too high then many parts may seem like they’re not good enough? Idk what the line of thought is here

Post-Mortem about why I don’t know about the line of thought between most parts of a functional system and high goals.

Why don’t I know if there’s a line of thought between most working parts in a functional system and goals being too high?

Cuz I don’t know if talking about high goals is even the thing being discussed.

Why?

Because i only see the part about a functional system having most parts that are good enough. That doesn’t necessarily mean I can start talking about the goal of the system.

Why not?

Just cuz the system is achieving a goal and it has most parts that are good enough and I want better results, that doesn’t mean that I can talk about goals being too high.

Why not?

Idk i can’t name some examples of functional systems and their parts being good enough and what goals are set.

Local Optima

Say you’re a small business owner and you fire your best employee and replace her with AI to save money. There is a local optima here: you save money. You get a benefit. But in the big picture, what’s the goal? What’s the global optima? The success of the company. This decision could easily harm the company.

Local optima is about getting some sort of benefit but not taking the big picture into account.

If you raise prices, then you get more money for each sale. This is clearly a benefit. But is it a good idea overall? Maybe, maybe not. It depends. Will you lose a lot of customers? If you just focus on the local optima and assume it’s good, and don’t consider other stuff like downsides and what your goal is, that’s poor thinking.

Say you have a software algorithm that takes 15 minutes to run. You optimize some details and now it takes 14 minutes. So that’s an improvement. But maybe that’s a local optima. Maybe you should have changed to a different algorithm and then it would take 10 seconds. Optimizing the details of the slow algorithm was a waste of time. Or maybe this code runs once a month at night and 15 minutes is already fast enough. It could take a few hours and it’d be fine. So getting it to 14 minutes or 10 seconds are both a waste of time. It doesn’t need to be changed. Changing it won’t help the company make more money and it won’t make customers happier.

Excess Capacity

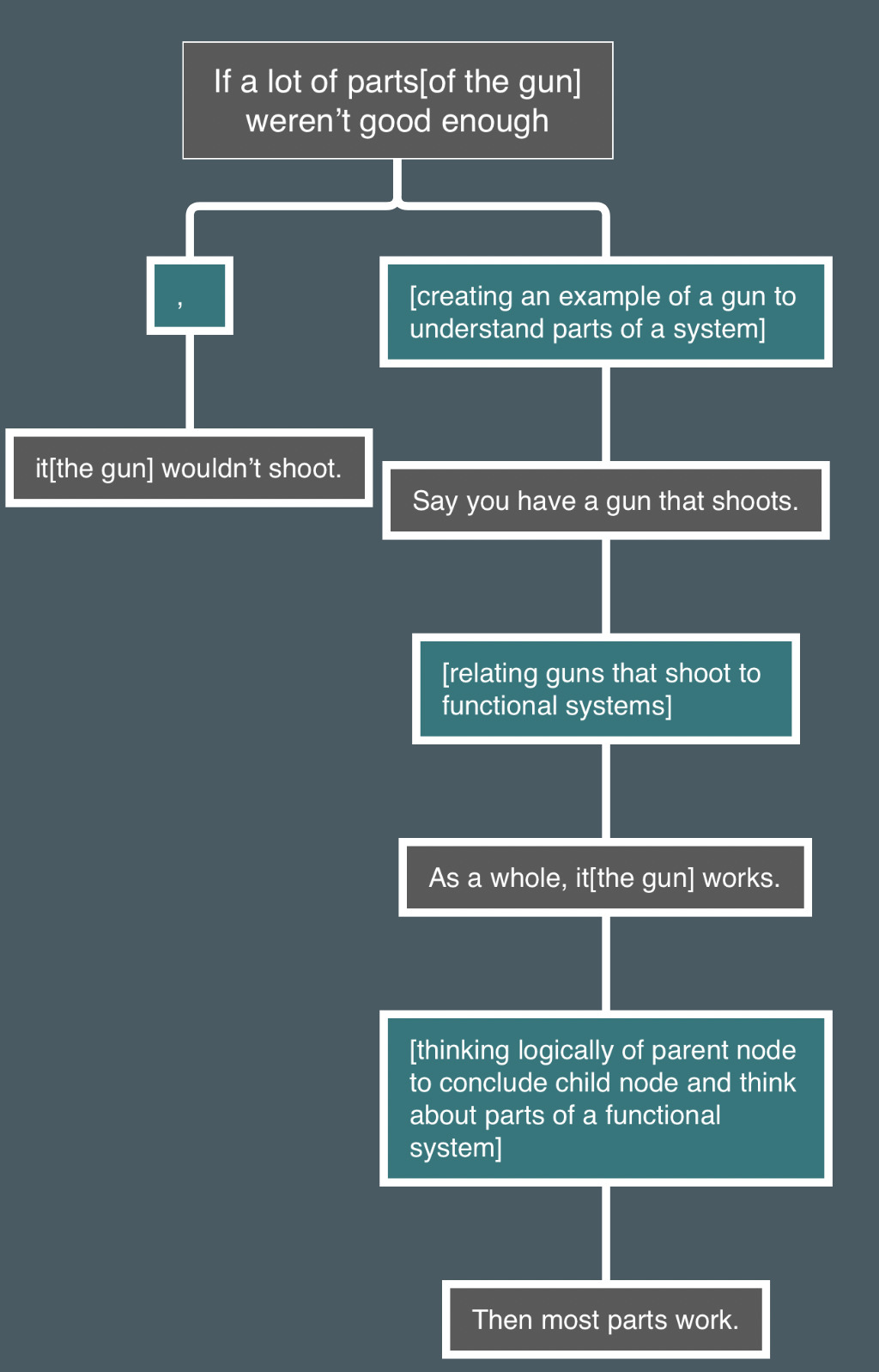

Say you have a gun that shoots. As a whole, it works. Then most parts work. If a lot of parts weren’t good enough, it wouldn’t shoot.

If you have a gun that does not shoot, then any number of parts could be totally broken. There’s no requirement for parts to be functional let alone have excess capacity.

If you go to the shooting range every week for a year and the gun works every time, then most parts of the gun have excess capacity. If a dozen parts were just barely good enough, then something would be very likely to break within a year. Random variance + small margin of error + 12 things = high chance something fails. If only one part is barely good enough, and all the rest have excess capacity, then it’s more realistic that it works for a year with no failures.
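
That rough equation can be turned into arithmetic with a short Python sketch. The failure rates below are invented for illustration, and the sketch assumes that parts fail independently of each other and that each range trip is an independent chance to fail.

```python
def chance_of_failure(per_use_failure_rates, uses):
    """Probability that at least one part fails at least once over all uses,
    assuming independent parts and independent uses."""
    survive_one_use = 1.0
    for p in per_use_failure_rates:
        survive_one_use *= 1.0 - p
    return 1.0 - survive_one_use ** uses


VISITS = 52        # one range trip per week for a year
MARGINAL = 0.005   # hypothetical: a barely-good-enough part fails on 0.5% of trips
ROBUST = 0.00001   # hypothetical: a part with excess capacity almost never fails

twelve_marginal = chance_of_failure([MARGINAL] * 12, VISITS)
one_marginal = chance_of_failure([MARGINAL] + [ROBUST] * 11, VISITS)
print(round(twelve_marginal, 2), round(one_marginal, 2))
```

With these made-up rates, a gun with twelve marginal parts fails at some point during the year with a probability above 90%, while a gun with one marginal part and eleven robust parts fails with a probability below 30%.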

This type of analysis works for other things that have some kind of purpose/goal. Over time, random variance would cause failure if a lot of parts were just barely not failing. If it works reliably over time, then most parts have excess capacity. If it doesn’t work at all and has never worked (e.g. it’s incomplete, not done yet), then any number of parts could not work and not even be close to working.

@Eternity My two previous posts are examples of mini essays.

If only one part is barely good enough, and all the rest have excess capacity, then it’s more realistic that it works for a year with no failures.

If all parts have excess capacity, or no parts only barely work, does the gun have a bottleneck? Is there always a bottleneck? I thought a point in The Goal is that you shouldn’t try to have no bottlenecks, because the bottleneck would just move around wildly when you try to do that through balancing the plant. Is a gun different from a plant because the plant always tries to improve while the gun is made and then it’s done? The gun design could continually improve so there would be bottlenecks in the design, but that’s different from a gun which is already made?

Is a gun different from a plant because the plant always tries to improve while the gun is made and then it’s done?

Basically yes. If you were satisfied with producing 100 widgets/day, you could have tons of excess capacity at everything and no bottlenecks. But if you try to maximize production then there will be a relevant weakest link somewhere in the factory.

Say you’re a small business owner and you fire your best employee and replace her with AI to save money.

When you bring up this example I automatically think that I as an owner am improving local optima, but a few sentences later you ask what’s the goal? So i think im missing the point or doing something extra trying to understand local optima.

It’s hard for me to identify the local optima cuz im asking why is firing the employee saving money? What is saving money identified as? I don’t immediately see that it’s a result and that relates to local optima

Post-Mortem about why I’m not identifying results and local optima

Why don’t I see that saving money is a result and may be a local optima?

Because I’m not looking for results or mini goals, not in the first example sentence.

Why?

Because I’m not looking for prepositions like “to” and prepositional phrases that may indicate a goal or a benefit or result. If I look for those then I can see that there’s a result and can relate that to local optima. I think I just have trouble seeing whether the result or benefit is a local optima or not. I think I’m supposed to think of the goal to see if it’s good in the bigger picture.

But in the big picture, what’s the goal?

It’s hard to see that the goal matters when identifying local optima. Like, it doesn’t make sense to me that when I think of a result or benefit that I should be thinking about the goal. I don’t think it makes sense to me cuz I don’t manually think of goals when thinking how good a result is. I think it’s very automatic.

What’s the global optima?

I think you ask this cuz firing the person was local optima. We should instead find the global optima for better results.

The success of the company.

I thought the above quote was a global optima. It looks like a goal. What I’m questioning now is if I know what global optima means and if it could also mean goal. Maybe I’m misidentifying the above quote, and that it may actually be a global optima, a part of the system that if changed leads to better results. Now, I’m wondering if the last sentence is correct. That’s cuz I don’t think I read the part that talked about global optima yet. I’m assuming global optima is the opposite of local optima. If local optima is… I forgot what local optima is.

I looked it up and I saw that it’s improving individual parts of the system that doesn’t lead to better results… Ok, I reread the quote again and saw that I messed up and local optima is actually the parts of a system that are already good enough or have excess capacity. Improving local optima won’t lead to better results.

Ok, I have a lot of questions about how I’m reading the material and interpreting it and why I’m messing up. That or why I think I’m messing up.

This decision could easily harm the company.

The decision could easily harm the company because maybe the person covers for a lot of employees and firing them now has the owner lose someone important. Maybe firing them was bad cuz now there’s less manpower in the company. Less manpower can make things harder for everybody.

I’m guessing that if you think about what’s already good enough in the company, then you can find that firing the person changes those local optima into something else.

Imma think of local optima that could be changed that harms the company:

- what could be good enough is people covering others for their break

- how much manpower there is per work station

- enough of an item being produced by that person

It’s kind of hard to think of local optima.

Post-Mortem about why it’s hard to think of local optima

Why is it hard to think of local optima examples on the fly?

cuz im not thinking about things that could be good enough or things that are made in numbers

Why am I not thinking of things that could be good enough?

Cuz I don’t know what part of the company’s system there could be. Like, I didn’t list them all out

Local optima is about getting some sort of benefit but not taking the big picture into account.

There for sure is a benefit when improving local optima. It just doesn’t increase throughput i think.

I’ve seen the phrase ‘take the big picture into account’ and that was always kind of hard to visualize or think of correctly. Like, ‘I ask myself is this a big picture thing or a small picture thing?’ I wonder if big picture is related to the goal. Say a company has one machine produce material for the next two machines to mess with and create widgets. The small picture things could be the rate of the individual machines making material and producing widgets. And maybe the big picture stuff is how many widgets are sent out of the factory in total?

I think I should look up what big picture and small picture mean. I thought I could use logic to find the meaning.

If you raise prices, then you get more money for each sale.

If the global optima is the success of the company, then more money for each sale doesn’t necessarily mean the global optima is being achieved.

What is the success of the company? I think that may mean the company is making a profit or isn’t losing employees at a bad rate or isn’t making health violations.

This is clearly a benefit. But is it a good idea overall? Maybe, maybe not. It depends.

The benefit that’s clear is more money per sale. Numbers are going up for the company. But for how long?

When you’re thinking of good ideas overall, it means to think of the big picture. I’m guessing that means look at the global optima or maybe the overall goal.

The reason why the clear benefit could be or could not be good is because, if you didn’t think of the downsides and are not seeing that many bad ones, then it’s fine. Why tho leave it up to there maybe being downsides or not?

If you just focus on the local optima and assume it’s good, and don’t consider other stuff like downsides and what your goal is, that’s poor thinking.

It’s poor thinking cuz all those four actions can be helped. Doing those four at the same time I think is saying, respectively, ‘idk what the local optima are here,’ ‘idk what a good overall idea for the company is,’ ‘idk what the downsides are or how bad they could be if I do,’ and ‘idk what the goal of my company is or idk what the goal of company has to do with improving stuff.’

Optimizing the details of the slow algorithm was a waste of time.

Ok, I did not see that. Not until ET said it. In the sentence before the above quote, ET talked about changing to a different algorithm and it now taking 10 seconds for the program to run. I didn’t pick up on those clues to lead me to conclude the quote above. That’s before reading the quote. I had to read the stuff before the quote again to make sure that optimizing the details of the old algorithm was a waste of time. That’s if the goal is to make the software algorithm run a bunch of times.

Or maybe this code runs once a month at night and 15 minutes is already fast enough.

It being 15 minutes is already enough cuz why stress about it being 15 mins vs 10 seconds? Is the algorithm crashing or something?

Or maybe this code runs once a month at night and 15 minutes is already fast enough. It could take a few hours and it’d be fine. So getting it to 14 minutes or 10 seconds are both a waste of time. It doesn’t need to be changed.

Ok, I think I see why I didn’t read a conclusion like the second to last and last sentence. It’s because I wasn’t thinking, “what is the global optima,” or maybe, “what is the goal?” That stuff checks if something is a waste of time or not.

Changing it won’t help the company make more money and it won’t make customers happier.

I’m thinking making more money and making customers happier could be global optima. It’s cuz when you improve local optima, you’re improving stuff that already has excess capacity. Worrying about making more money or making the customers happier seems to be needing improvement. I forgot if that means that those are global optima or the goal.

Post-mortem about identifying global optima or goals from the last sentence:

Why don’t I know if making more money or making customers happier are global optima or goals?

Cuz I haven’t read much about what the goal is in TOC.

Why?

Cuz i don’t remember the goal being explained very much?

Why don’t I remember?

Cuz I was focused more on the other stuff like local optima or factors that have excess capacity. I thought that stuff needed more attention to learn.

Why do I think those need more attention to learn?

Cuz if I don’t know what local optima are then I can’t even think about most factors of a system.

Ok, why not focus on other factors that need more attention?

Idk i thought I could get away with not learning those yet. Not until they came up.

Excess Capacity

Say you have a gun that shoots. As a whole, it works. Then most parts work. If a lot of parts weren’t good enough, it wouldn’t shoot.

Here’s a paragraph tree I made about the quote above:

color codes for nodes:

paragraph tree:

I now think the root node is a condition that if met makes its left child node true. I didn’t think a whole lot about what the root node was. That was what I thought automatically.

The first sentence talks about a gun that shoots. That’s a functional system. The goal could be I think that it shoots. I think the reason why the second sentence talks about the gun as a whole is because you can think of it as a functional system.

The third sentence talks about how to look at the system. If the system works then that means most parts work. The goal is being met. I think that’s what’s being talked about.

The fourth sentence talks about how a system that’s not functional would look like. The first clause talks about the condition of lots of parts not being good enough. That’s the topic of local optima or parts of the system having excess capacity. The second clause of the sentence talks about what a non functional system would look like: It wouldn’t meet its goal.

If you have a gun that does not shoot, then any number of parts could be totally broken.

I wonder if “any number” also includes zero. Zero is a number. That I think means that 0 parts could be totally broken and the gun wouldn’t shoot. I also think that cuz “totally broken” is definite. What about kind of broken? I don’t know other adjectives to use.

There’s no requirement for parts to be functional let alone have excess capacity.

So I think if a gun doesn’t shoot and that goal isn’t being met then there’s no requirement for parts to be functional. Excess capacity means more than enough, I think. I don’t know why the above quote is talking about requirements. Are we talking about requirements of a system that’s not functional?

Post-Mortem about why I don’t know what the second sentence is talking about:

Post-Mortem:

Why don’t I know what the second sentence of the above quote is talking about?

Cuz I don’t know if it’s referring to the broken gun or if we’re now talking about a functional system.

Why don’t I know?

Because I don’t know how the two sentences connect. I guess that’s not automatic for me yet. I thought it would be.

Also I don’t think I’m taking the second sentence at face value enough. Like, what does it mean that there’s no requirement for parts to be functional let alone have excess capacity? Am I having trouble knowing what ‘requirement’ means? My guess is that ‘requirement’ is a condition to fulfill. If that’s fulfilled then whatever required something could be true. I think the sentence is saying that that requirement isn’t even needed. I’m pretty sure a functional weapon needs parts to be functional or have most parts with excess capacity. That leaves the gun that can’t shoot. The gun that can’t shoot doesn’t have such a requirement.

If you go to the shooting range every week for a year and the gun works every time, then most parts of the gun have excess capacity. If a dozen parts were just barely good enough, then something would be very likely to break within a year.

Had to read that a second time cuz I thought we were talking about using the gun once a year.

The first sentence talks about how to think of parts of a system. If you can use the gun often for a year then many parts of that system have excess capacity. They can withstand a lot I think.

The second sentence talks about parts of a system having excess capacity vs. parts being just good enough. I think the latter part of the versus means the parts go past a threshold half the time or something.

Random variance + small margin of error + 12 things = high chance something fails.

The first part of the equation is, I think, how much value each part produces. I think that’s like thinking of a machine that makes fewer widgets than its capacity, or more, or exactly its capacity. It can vary. I think the small margin of error means that if a part makes less than it has to, then it will fail or something. Idk. The 12 things are parts that are barely good enough I think. That’s a lot of things with a small margin of error. That and, like all things, I think they deal with random variance.
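One way to see why the quoted "equation" gives a high chance of failure is to multiply out the odds. This is only a rough sketch: the per-use failure chances (0.5% for a barely-good-enough part, 0.001% for a part with excess capacity) are made-up numbers, not from the essay, and it assumes every part fails independently of the others.

```python
def chance_something_fails(per_use_failure_prob, part_count, uses):
    """Chance that at least one part fails at least once.

    Assumes each part fails independently on each use (an assumption
    made for illustration, not a claim from the essay).
    """
    survive_one_use = 1.0 - per_use_failure_prob
    # Every part has to survive every use for the gun to keep working.
    survive_everything = survive_one_use ** (part_count * uses)
    return 1.0 - survive_everything


# Hypothetical numbers: 12 parts, one range trip a week for a year.
barely_good_enough = chance_something_fails(0.005, part_count=12, uses=52)
excess_capacity = chance_something_fails(0.00001, part_count=12, uses=52)

print(f"12 barely-good-enough parts: {barely_good_enough:.1%} chance of a failure")
print(f"12 parts with excess capacity: {excess_capacity:.1%} chance of a failure")
```

Even a small chance of failure per part adds up when there are 12 parts and 52 uses, which is the point of "random variance + small margin of error + 12 things."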

This type of analysis works for other things that have some kind of purpose/goal.

I think when you say “This type of analysis” you’re talking about finding out if a system has parts with excess capacity, barely enough capacity(?), or not working in the first place or not being close to working.

You can find out if a system is functional or not. In a non-functional system, the system has parts that don’t even work in the first place. How many? Idk. I think it would be important here to talk about how there’s no requirement for parts to be functional, let alone have excess capacity. In a functional system, most parts can have excess capacity or maybe some parts can be barely good enough. How many parts? Idk but that seems kind of like a spectrum.

Good job working on close reading to understand more than you would if you just read it once quickly.

Thank you. In a way, I tried reading quickly this time by reading the sentences fewer times and taking less time making responses. That lets me read more and respond to more sentences. I just tried using some analysis quickly to see if that worked.

TOC’s five focusing steps are a method for making improvements.

It’s interesting that the steps are called “focusing steps.” I wonder what that means. Like, are we focusing our attention on the most important issues? Kinda. I think maybe focusing our attention on the system to improve it? I saw Gemini say it was something about focusing our efforts to improve a system. Something like that.

Making improvements sounds important to define I think. Cuz maybe even the slightest gain is an improvement on something. I think the improvements depend on the goal, the goal of the system I think?

conflict resolution:

z: know what “making improvements” means

x: making improvements means making even the slightest improvement. I say that cuz a small improvement is still an improvement.

y: “making improvements” means making improvements according to the goal. Why according to the goal? Cuz in TOC the goal is important and you make improvements to reach the goal I think. Why reach the goal? I think that means making throughput. I forgot how to use throughput in a sentence

w: “making improvements” means improving stuff in a system. What stuff? I mean like better machines (i.e. parts of the system).

First, find the constraint.

If you know what a constraint is then you can identify it. By that I mean if you know what’s the slowest part of the system… Wait, I forgot what the constraint is. The constraint can literally be a part that doesn’t have excess capacity. That or it may not even be barely enough. I have to look at past posts to remember what a constraint is brb.

Ok, I remember that the constraint is related to throughput. Improving the constraint will increase throughput. We will get more of the goal I think? Maybe we’ll reach our goal? Our new goal? I’m unsure. I didn’t review all that much besides the original post’s topic about constraints.

Ok, I looked at my reply in another post where I analyze the Constraints topic. The constraint limits throughput. If I know what “throughput” is then I can know what the constraint does to it. If throughput is a number then that means that the constraint stops the number from going up. I think if I also learn what “limits” means then I’ll know how the constraint interacts with throughput.
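Here’s a small sketch of what “the constraint limits throughput” could look like if throughput is a number. It assumes a simple chain of three stages where the whole system can only go as fast as its slowest stage. The stage names and capacities are made up for illustration.

```python
def system_throughput(capacities):
    """Throughput of stages in series: the slowest stage sets the pace."""
    return min(capacities.values())


def find_constraint(capacities):
    """Name of the stage with the lowest capacity (the constraint)."""
    return min(capacities, key=capacities.get)


# Hypothetical capacities in units per minute.
stages = {"top": 40.0, "middle": 15.0, "bottom": 30.0}

print(find_constraint(stages), system_throughput(stages))

# Improving a stage that is not the constraint changes nothing.
improved_top = dict(stages, top=80.0)
print(system_throughput(improved_top))

# Improving the constraint raises throughput, up to the next-slowest stage.
improved_middle = dict(stages, middle=25.0)
print(find_constraint(improved_middle), system_throughput(improved_middle))
```

In this sketch, “limits” means the throughput number can’t go above the constraint’s capacity no matter how much the other stages improve.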

In the Marris Consulting example, the constraint which is the middle spout limits throughput. The throughput there is the amount of water flowing through. I think? Let me look at the video real quick to find out.

Ok, I saw the video again to understand what the goal was. The goal was to get water to the bottom. In the following quote of the Constraints topic:

Our goal is to get water to the bottom. The middle spout is the constraining factor for water flow.

Water has to get to the bottom to reach the goal. How much? And by what time? Since the first sentence says “get water,” that might mean any amount of water I think? It may mean all the water? Also, since the second sentence talks about water flow, that means we’re talking about how much water passes over a certain amount of time. The throughput may be how much water flows down over time at the last spout; that’s where water reaches the bottom. I should look up what “throughput” means.

From Introduction to Theory of Constraints:

Success at our goal is called throughput, which means moving resources through a system to a goal at the end.

If you have success at a goal that’s what’s called throughput. If the goal is to graduate college with any associate degree, then accomplishing that is throughput.

Wanting to get a passing score on the test is the goal. Getting a passing score is throughput. The passing score means the goal was accomplished.

In the Marris Consulting example, if the goal was to just get all the water down the drain then, in a way, the time it takes doesn’t matter. Whatever spout size we use that lets all the water pass through after a certain amount of time will be ok. After all the water passes through, we can identify that as throughput. If the goal was to get water to the bottom at a reasonably quick time then it would be good to increase the correct spout sizes. We’ll get a good water flow that way. Let’s say we want to drain 30 L of water to the bottom in one minute. We’ll be using the spouts in the Marris Consulting example, but those spouts right now drain the water in two minutes. That means our goal is to have the water drain at a speed of 0.5 L every second. When you increase the spout that was the constraint, the speed you wanted and got is the throughput. You want water to drain at a certain rate and now you have that rate. You’re successful at your goal.

My questions about the paragraph above:

Is there a certain size of spout that’ll have the water take an infinite amount of time to pass through?

In the third sentence, is all the water passing through success at our goal? Does “goal” mean making an idea to do something and its success is actually doing it? Maybe it’s passing a checklist or a set of criteria.

In the third sentence again, is identify a good word to use? I think I want to say all the water passing through is called throughput.

Is saying, “draining 30L of water every minute” different than saying “draining 30L of water in one minute?”

Is the second goal of the paragraph a reasonable goal? Like, was it good to show my understanding about what throughput is? I’m doubting it.
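As a quick check on the numbers in the 30 L example, the drain rates can be worked out directly. This is just arithmetic on the made-up goal from the paragraph (30 L in one minute versus 30 L in two minutes), and it assumes the water drains at a steady rate.

```python
def drain_rate_liters_per_second(liters, seconds):
    """Average drain rate, assuming a steady flow."""
    return liters / seconds


# Goal: 30 L in one minute. Current spouts: 30 L in two minutes.
target_rate = drain_rate_liters_per_second(30, 60)
current_rate = drain_rate_liters_per_second(30, 120)

print(f"target: {target_rate} L/s, current: {current_rate} L/s")
print(f"the constraint needs to let through {target_rate / current_rate:.0f}x as much water")
```

On the “every minute” vs. “in one minute” question: at a steady rate both come out to the same number, 0.5 L per second. The difference is that “every minute” suggests the flow keeps going, while “in one minute” describes a single batch of 30 L.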